Workplace AI Tools Create New Privacy Battleground

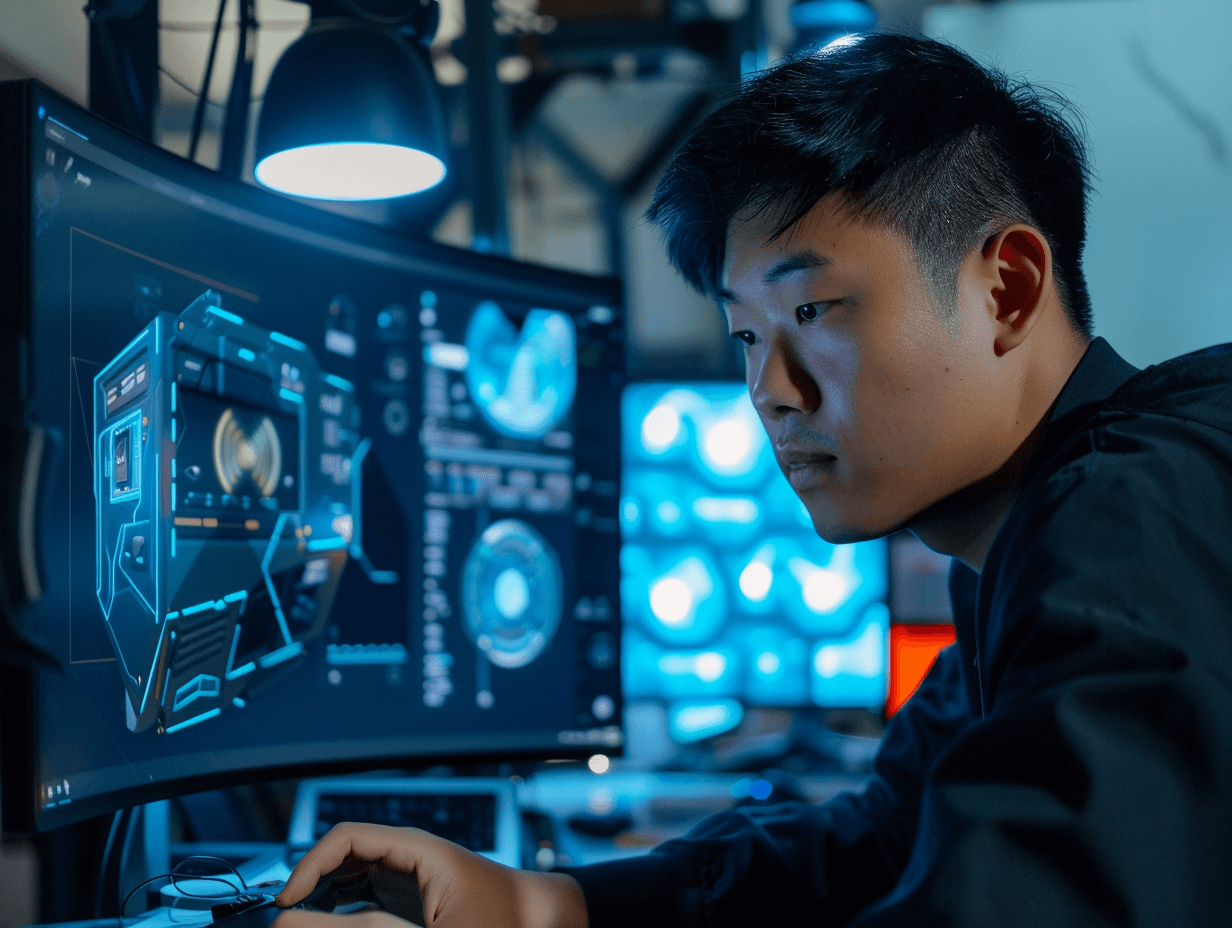

Generative AI tools like OpenAI's ChatGPT and Microsoft's Copilot are transforming workplace productivity, but they're also opening unprecedented privacy and security vulnerabilities. From inadvertent data exposure to potential surveillance overreach, businesses across Asia are grappling with risks that traditional cybersecurity frameworks weren't designed to address.

The stakes couldn't be higher. As organisations rush to integrate AI capabilities, they're discovering that these powerful tools can become digital Trojan horses, potentially exposing sensitive corporate data or enabling new forms of employee monitoring.

High-Profile Bans Signal Growing Alarm

The US House of Representatives has banned staff members from using Microsoft's Copilot, citing the risk of leaking House data to non-House-approved cloud services. Gartner has similarly cautioned that using Copilot for Microsoft 365 creates significant risks of exposing data both internally and externally.

Privacy advocates dubbed Microsoft's new Recall tool a potential "privacy nightmare" after learning it takes screenshots of users' screens every few seconds. The UK's Information Commissioner's Office is now seeking detailed safety information from Microsoft before the tool launches on Copilot+ PCs.

Similar concerns are mounting over OpenAI's ChatGPT, which has demonstrated screenshotting capabilities in its upcoming macOS app. Privacy experts warn this could result in the capture of sensitive corporate information without users' explicit awareness.

By The Numbers

- 78% of enterprise AI implementations lack proper data governance controls, according to recent industry surveys

- The global average cost of a data breach reached $4.88 million in 2024, according to IBM's annual Cost of a Data Breach report

- Copilot for Microsoft 365 is deployed across more than 1 million organisations worldwide

- Screenshot-based AI monitoring tools capture data every 3-5 seconds during active use

- 65% of Asian businesses report inadequate AI privacy training for employees

The Data Sponge Problem

Most generative AI systems function as "essentially big sponges," according to Camden Woollven, group head of AI at risk management firm GRC International Group. They absorb vast amounts of information from the internet to train their language models, creating unprecedented data aggregation risks.

"If an attacker managed to gain access to the large language model that powers a company's AI tools, they could siphon off sensitive data, plant false or misleading outputs, or use the AI to spread malware," Woollven warns.

The threat extends beyond traditional hacking. AI browsers face deep security flaws that researchers have recently exposed, demonstrating how quickly new AI technologies can introduce unforeseen vulnerabilities.

Phil Robinson, principal consultant at security consultancy Prism Infosec, notes that even "proprietary" AI offerings broadly deemed safe for work, such as Microsoft Copilot, are creating increasing numbers of potential security issues that organisations aren't prepared to handle.

Surveillance Concerns Mount

Beyond data exposure, AI tools are raising fresh concerns about employee monitoring. While Microsoft states that snapshots taken by its Recall feature stay locally on users' PCs under their control, the potential for workplace surveillance applications is evident.

"It's not difficult to imagine this technology being used for employee monitoring. The capability exists, and that creates inherent privacy risks regardless of stated intentions," observes Steve Elcock, CEO and Founder, Elementsuite.

The concern extends across Asia, where workplace AI affects younger, tech-enthusiastic staff differently from more experienced workers, potentially creating a generational divide in privacy expectations.

The following table illustrates the evolving risk landscape:

| Risk Category | Traditional IT | AI-Enhanced Systems | Mitigation Complexity |
|---|---|---|---|
| Data Exposure | Controlled access points | Continuous data ingestion | High |
| Employee Monitoring | Limited to specific tools | Comprehensive activity capture | Very High |
| External Threats | Perimeter-based defence | AI model manipulation | Extreme |
| Compliance | Established frameworks | Evolving regulations | Medium |

Practical Protection Strategies

Lisa Avvocato, vice president of marketing and community at data firm Sama, emphasises that businesses and employees should avoid putting confidential information into prompts for publicly available tools such as ChatGPT or Google's Gemini.

When crafting prompts, specificity becomes the enemy of security, and the same caution applies to how organisations govern the tools themselves:

- Ask "Write a proposal template for budget expenditure" rather than sharing actual budget details

- Request generic code templates instead of uploading proprietary algorithms

- Use hypothetical scenarios rather than real client names or project details

- Validate all AI-generated content by requesting references and sources

- Apply the "least privilege" principle, ensuring users only access necessary information

- Regularly audit AI tool usage across departments

- Implement clear policies about which AI tools are approved for business use
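
The prompt advice above can be partly automated. The following is a minimal Python sketch, not a complete data-loss-prevention tool, of scrubbing a prompt before it is sent to a public AI service. The function name `redact_prompt`, the two regular expressions, and the placeholder labels are all illustrative assumptions; a real deployment would need patterns tuned to its own data and would still rely on employee judgement.

```python
import re

# Hypothetical patterns for illustration only; they will miss many real cases.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "AMOUNT": re.compile(
        r"(?:US\$|S\$|\$|£|€)\s?\d[\d,]*(?:\.\d+)?(?:\s?(?:million|billion)\b)?",
        re.IGNORECASE,
    ),
}


def redact_prompt(prompt, client_names=()):
    """Replace client names, email addresses and currency amounts with placeholders."""
    redacted = prompt
    # Client names come from a list the organisation maintains (assumed here).
    for name in client_names:
        redacted = re.sub(re.escape(name), "[CLIENT]", redacted, flags=re.IGNORECASE)
    # Apply each generic pattern in turn.
    for label, pattern in PATTERNS.items():
        redacted = pattern.sub(f"[{label}]", redacted)
    return redacted


if __name__ == "__main__":
    raw = "Email jane.doe@acme.com about Acme Corp's $1,200,000 budget"
    print(redact_prompt(raw, client_names=["Acme Corp"]))
```

A generic request such as "Write a proposal template for budget expenditure" passes through unchanged, which is the point: the safest prompt is one that contains nothing to redact.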

Understanding how digital agents will transform the future of work helps organisations prepare comprehensive governance frameworks that account for evolving AI capabilities.

Microsoft emphasises that Copilot needs correct configuration with least privilege principles applied. Robinson from Prism Infosec stresses that organisations must lay proper groundwork rather than simply trusting the technology.
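
To make the least privilege idea concrete, here is a small Python sketch of the kind of check an organisation might apply before an AI assistant is allowed to draw on internal documents. It does not represent Copilot's actual configuration or any Microsoft API; the `Document` class, the group names, and `visible_documents` are hypothetical and exist only to illustrate the principle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    allowed_groups: frozenset  # groups explicitly granted access


def visible_documents(user_groups, documents):
    """Return only documents that share at least one group with the user.

    A document with no allowed groups is visible to nobody (default deny).
    """
    groups = set(user_groups)
    return [doc for doc in documents if groups & doc.allowed_groups]


if __name__ == "__main__":
    docs = [
        Document("Payroll 2024", frozenset({"hr"})),
        Document("Q3 budget", frozenset({"finance", "executive"})),
        Document("Unclassified draft", frozenset()),
    ]
    for doc in visible_documents({"finance"}, docs):
        print(doc.title)
```

The design choice that matters is the default: anything not explicitly granted is excluded, so an assistant acting on a user's behalf cannot surface material the user could not have opened directly.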

Vendor Assurances vs Reality

Tech giants are pushing back against privacy concerns with detailed security commitments. Microsoft outlines security and privacy considerations in its Recall product documentation, while Google maintains that generative AI in Workspace "does not change our foundational privacy protections."

OpenAI offers enterprise versions with additional controls and states its models don't learn from usage by default. The company provides self-service tools to access, export, and delete personal information, plus opt-out capabilities for model improvement.

However, the gap between vendor promises and workplace reality remains significant, particularly as uncontrolled AI becomes a growing threat to businesses across the region.

What constitutes sensitive data in AI workplace contexts?

Any information that could identify individuals, reveal business strategies, expose financial details, or compromise competitive advantages. This includes client communications, internal documents, employee records, and proprietary processes that weren't intended for external sharing or analysis.

How can employees identify if their AI tools are monitoring them?

Look for screenshot notifications, unusual system permissions, or privacy policies mentioning data collection. Check if AI tools request access to cameras, microphones, or screen recording. Review workplace policies about monitoring and ask IT departments directly about AI surveillance capabilities.

What should companies do if they discover AI data exposure?

Immediately assess the scope of exposed information, notify affected stakeholders, and implement containment measures. Document the incident for compliance purposes, review AI tool permissions, and strengthen data governance policies to prevent future exposures while considering legal obligations.

Are enterprise AI versions actually safer than consumer tools?

Enterprise versions typically offer better data controls, audit trails, and compliance features, but they're not inherently immune to privacy risks. They require proper configuration, ongoing monitoring, and clear governance policies to realise security benefits over consumer alternatives.

How do Asian privacy regulations affect workplace AI adoption?

Asian countries are implementing increasingly strict AI governance frameworks that require explicit consent, data minimisation, and transparency. Companies must navigate varying national requirements while ensuring AI tools comply with local privacy laws and cultural expectations about workplace monitoring.

As AI systems become more sophisticated and omnipresent in Asian workplaces, these privacy and security challenges will only intensify. The key is treating AI like any other third-party service: don't share anything you wouldn't want publicly broadcast. With proper governance frameworks and employee education, businesses can harness AI's productivity benefits while protecting sensitive information and respecting privacy rights.
