Security researchers are sounding the alarm on AI browsers , and the findings are deeply unsettling

Artificial intelligence has been woven into the fabric of modern web browsing at remarkable speed. From Microsoft's Copilot-infused Edge to Google's AI Overviews baked into Chrome, and a growing roster of challenger browsers emerging from Asian tech hubs, the race to make browsing smarter has arguably outpaced the race to make it safer. Now, security researchers are calling time on that imbalance , and the findings are difficult to dismiss.

New research has exposed serious structural vulnerabilities in AI-powered browsers, spanning prompt injection attacks, data leakage pathways, and concerning interactions between AI agents and live web content. The implications stretch well beyond individual privacy. In a region as digitally active as Asia-Pacific, where hundreds of millions of users increasingly rely on AI-enhanced tools for everything from banking to government services, these flaws carry systemic risk.

By The Numbers

- AI-integrated browsers now account for a rapidly growing share of browser usage across Asia-Pacific, with Microsoft Edge and Google Chrome alone commanding over 70% of the desktop market in key markets like Japan and South Korea.

- Prompt injection attacks , one of the primary attack vectors identified by researchers , have increased significantly as AI assistants become embedded in everyday tools, with security firms documenting a sharp rise in documented cases through 2024 and into 2025.

- Singapore, Japan, and South Korea all have active data protection legislation (PDPA, APPI, and PIPA respectively) that could expose browser vendors to regulatory action if AI-related data breaches are confirmed.

- Google and Microsoft collectively invest billions annually in browser security, yet researchers argue AI integration has introduced an entirely new attack surface that existing security frameworks were not designed to address.

- Emerging Asian browser developers , including those backed by Chinese and South Korean tech conglomerates , are rapidly expanding AI feature sets, often with less mature security review processes than their Western counterparts.

What the Research Actually Found

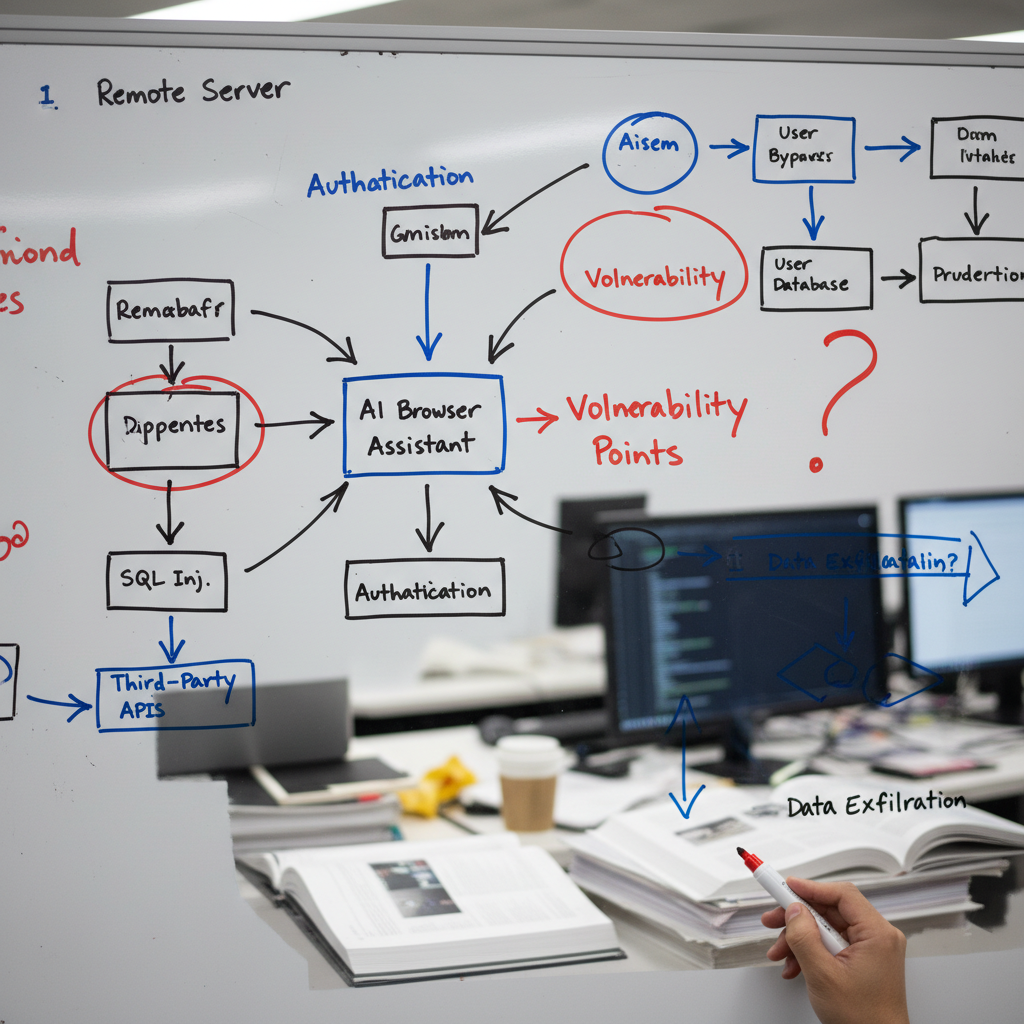

The vulnerabilities identified by security researchers fall into several distinct but interconnected categories. Prompt injection is perhaps the most alarming. This attack type involves malicious instructions being embedded into web content that an AI browser assistant then inadvertently executes, potentially leaking user data, performing unintended actions, or being manipulated into serving misleading information.

Unlike traditional cross-site scripting attacks, prompt injection exploits the very intelligence that makes AI browsers appealing. The AI cannot always distinguish between a legitimate user instruction and a malicious instruction hidden inside a webpage it is summarising or interacting with. That ambiguity is, at its core, an architectural problem , not merely a bug to be patched.

"The fundamental architecture of AI browsers may need a complete rethink." , Security researchers cited in ongoing vulnerability disclosures, 2025

Data leakage represents the second major concern. When an AI assistant processes a user's browsing session , reading page content, summarising documents, suggesting responses , it necessarily handles sensitive information. Researchers have demonstrated scenarios in which this data can be exfiltrated through carefully crafted web content, or inadvertently included in AI model queries transmitted to remote servers.

The third vulnerability category involves agentic browsing behaviour. As AI browsers evolve from passive assistants into active agents , capable of filling forms, executing purchases, and navigating sites on a user's behalf , the attack surface expands dramatically. A compromised AI agent operating with user permissions is, in effect, a compromised user account.

Why Asia-Pacific Users Face Heightened Risk

The risk calculus is particularly sharp in Asia-Pacific. Users in markets like Japan, South Korea, Singapore, and increasingly India and Southeast Asia are among the world's most active adopters of AI productivity tools. Many use AI-enhanced browsers for sensitive tasks including financial management, healthcare research, and interactions with government digital services.

Each of these countries also operates within a strict data protection framework. Japan's Act on the Protection of Personal Information (APPI), South Korea's Personal Information Protection Act (PIPA), and Singapore's Personal Data Protection Act (PDPA) all impose significant obligations on companies that handle personal data , obligations that AI browser vendors may be struggling to meet given the opaque nature of how AI systems process and transmit information.

"Privacy-conscious users in markets like Japan, South Korea, and Singapore face particularly concerning implications given strict data protection regulations in the region." , AIinASIA research briefing, 2025

Regulatory exposure is real. If a confirmed AI browser vulnerability leads to a data breach involving citizens of these jurisdictions, the legal and financial consequences for browser vendors could be substantial. Singapore's PDPA, for instance, allows for fines of up to S$1 million for serious breaches , a figure that regulators have shown increasing willingness to apply.

The Competitive Landscape and the Asian Dimension

The browser market in Asia is not simply a replica of the Western landscape. While Chrome and Edge dominate, a number of Asian-developed browsers have been gaining ground , particularly those integrating local AI models. Chinese tech ecosystem players have embedded AI capabilities into browsers aimed at domestic and international markets, while South Korean and Japanese firms have experimented with AI assistants tailored to local language and cultural context.

The security implications of this fragmentation are significant. Larger vendors like Google and Microsoft have the resources and institutional knowledge to respond to vulnerability disclosures rapidly, even if the pace has been criticised as insufficient. Smaller or newer entrants may lack the same security review infrastructure, creating a patchwork of risk across the region's user base.

For context on how Chinese AI development is reshaping competitive dynamics across the region, the trajectory outlined in China's ambitious five-year AI technology programme is worth understanding , it signals that AI browser development will only accelerate from Beijing, with security considerations potentially secondary to market capture.

The Patch Problem and What Vendors Are Doing

Google, Microsoft, and other browser vendors have acknowledged the existence of AI-related vulnerabilities and are actively working on mitigations. However, security experts argue that patching individual flaws does not address the underlying structural issue: the integration of a probabilistic, instruction-following AI system into a security-sensitive environment was never going to be without consequence.

- Sandboxing AI processing to limit its access to sensitive session data

- Implementing strict content security policies that flag or block potential prompt injection attempts

- Requiring explicit user permission before AI agents take any action on a user's behalf

- Conducting independent third-party security audits of AI browser features before release

- Increasing transparency about what data is transmitted to remote AI servers during a browsing session

These measures are sensible, but experts caution they are incremental responses to what may be a foundational challenge. The question of whether an AI capable enough to be genuinely useful can also be made reliably safe in an adversarial web environment remains open.

This tension mirrors broader concerns about AI tool safety that are already affecting user behaviour. Research into how people actually use AI tools in daily life , covered in depth in our analysis of AI usage realities across Asia in 2025 , suggests that many users operate with a significant trust deficit, particularly around data handling. The browser vulnerability disclosures are unlikely to help.

The Productivity Paradox

There is a deeper irony at play here. AI browsers are marketed primarily as productivity tools. They promise to save time, reduce cognitive load, and make the web more navigable. Yet the security vulnerabilities they introduce create a new form of cognitive burden for users who must now weigh the efficiency gains against genuine privacy and security risks.

This dynamic is explored in detail in our coverage of the cognitive costs of AI-assisted productivity , the point being that the promise of effortless AI assistance frequently comes with hidden costs that are only becoming visible now.

| Vulnerability Type | How It Works | User Impact | Patch Feasibility |

|---|---|---|---|

| Prompt Injection | Malicious instructions embedded in web content executed by AI | Data theft, misleading outputs, unintended actions | Difficult , architectural issue |

| Data Leakage | Sensitive session data exfiltrated via AI query transmissions | Privacy breach, regulatory exposure | Partial , requires sandboxing |

| Agentic Misuse | AI agents manipulated to perform harmful actions with user permissions | Account compromise, financial loss | Requires user consent frameworks |

What Users Can Do Right Now

While vendors work on structural fixes, users are not entirely without recourse. The following practical steps can meaningfully reduce exposure:

- Disable AI features for sensitive browsing sessions, particularly when conducting financial transactions or accessing healthcare or government portals.

- Review browser privacy settings and disable any features that transmit browsing content to remote servers by default.

- Keep browsers updated , vendors are releasing patches as vulnerabilities are confirmed, and unpatched browsers carry the highest risk.

- Be sceptical of AI-generated summaries on sensitive topics, particularly those drawn from third-party web content, which may have been manipulated.

- Consider separate browser profiles for AI-assisted and non-AI browsing, isolating session data where possible.

It is also worth monitoring developments in the broader AI security space. The vulnerabilities in AI browsers are not isolated , they reflect wider structural weaknesses in how AI systems interact with untrusted input, a theme that surfaces repeatedly across AI tooling. Our coverage of the full scope of AI browser security concerns continues to track this story as new disclosures emerge.

Frequently Asked Questions

What is a prompt injection attack in an AI browser?

A prompt injection attack occurs when malicious instructions are hidden within web content , such as a webpage, document, or email , that an AI browser assistant then reads and acts upon. Because the AI cannot reliably distinguish between legitimate user instructions and embedded malicious ones, it may execute harmful commands, leak user data, or produce misleading outputs. It is one of the most difficult AI security vulnerabilities to patch because it exploits the core functionality of the AI system itself.

Are AI browsers safe to use for banking or sensitive tasks in Asia?

Currently, security researchers advise caution. Until AI browser vendors implement robust sandboxing, explicit user consent frameworks for agentic actions, and greater transparency about data transmission, disabling AI features during sensitive browsing sessions is the most prudent approach. Users in Japan, South Korea, and Singapore should also be aware that any data breach involving their personal information could trigger regulatory action under local data protection laws.

Which AI browsers are affected by these vulnerabilities?

The vulnerabilities identified by researchers are largely structural and affect any browser that integrates an AI assistant capable of reading web content, summarising pages, or acting as an agent. This includes Microsoft Edge with Copilot, Google Chrome with AI Overviews, and a range of emerging browsers from Asian technology firms. No single vendor has been identified as uniquely at fault , the issue is inherent to the current design paradigm of AI-integrated browsing.

Given what researchers have now confirmed, how are you managing AI features in your own browser , and do you trust your current setup with sensitive data? Drop your take in the comments below.