Tools

The Truth About OpenAI’s o1: Is It Worth the Hype?

Explore the capabilities and limitations of OpenAI’s o1 model, its impact on the AI community, and its potential role in the future of AI in Asia.

Published 7 months ago by AIinAsia

TL;DR:

- OpenAI’s o1 model excels at complex reasoning but is more expensive and slower than GPT-4o.

- OpenAI’s o1 is best suited to big, complicated tasks rather than simple questions.

- The AI community has mixed feelings about o1’s capabilities and its high cost.

The Arrival of OpenAI’s o1: A Step Forward or Back?

OpenAI recently released its new o1 models, nicknamed “Strawberry,” which pause to “think” before answering. While there’s been much anticipation, the model has received mixed reviews. Compared to GPT-4o, o1 is better at reasoning and complex questions but is roughly four times more expensive. It also lacks the tools, multimodal capabilities, and speed that made GPT-4o impressive. OpenAI even admits that GPT-4o is still the best option for most prompts.

Ravid Shwartz Ziv, an NYU professor studying AI models, shares, “It’s impressive, but I think the improvement is not very significant. It’s better at certain problems, but you don’t have this across-the-board improvement.”

Thinking Through Big Ideas

OpenAI o1 stands out because it breaks down big problems into small steps, attempting to identify when it gets a step right or wrong. This “multi-step reasoning” isn’t new but hasn’t been practical until recently. Kian Katanforoosh, Workera CEO and Stanford adjunct lecturer, explains, “If you can train a reinforcement learning algorithm paired with some of the language model techniques that OpenAI has, you can technically create step-by-step thinking and allow the AI model to walk backwards from big ideas you’re trying to work through.”

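However, o1 is pricey. OpenAI bills for “reasoning tokens”, the hidden tokens the model generates while working through those small steps. They are charged at the output-token rate even though they never appear in the final answer. Using o1 wisely is therefore crucial to avoid high costs.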
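To see why hidden reasoning tokens matter for cost, here is a minimal Python sketch. The per-million-token prices are assumptions chosen for illustration, so check OpenAI’s pricing page for current figures. `estimate_cost` is a hypothetical helper and not part of any OpenAI SDK. The sketch assumes reasoning tokens are billed at the output-token rate.

```python
# Illustrative prices in USD per million tokens (assumed, not authoritative).
INPUT_PRICE_PER_M = 15.00
OUTPUT_PRICE_PER_M = 60.00


def estimate_cost(input_tokens: int, answer_tokens: int, reasoning_tokens: int) -> float:
    """Return the estimated USD cost of a single o1-style request.

    Reasoning tokens are hidden from the user but, under our assumption,
    billed at the output-token rate, so they are added to the answer tokens.
    """
    billed_output_tokens = answer_tokens + reasoning_tokens
    return (
        input_tokens * INPUT_PRICE_PER_M
        + billed_output_tokens * OUTPUT_PRICE_PER_M
    ) / 1_000_000


if __name__ == "__main__":
    # Same prompt and visible answer, with and without hidden reasoning.
    print(f"{estimate_cost(200, 300, 0):.3f}")      # 0.021
    print(f"{estimate_cost(200, 300, 5_000):.3f}")  # 0.321
```

With these assumed prices, the same short answer costs roughly fifteen times more once 5,000 hidden reasoning tokens are added. That is why o1 makes more sense for hard problems than for quick lookups.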

OpenAI o1 in Action

To test o1, I asked o1-preview in ChatGPT to help plan Thanksgiving dinner for 11 people. After 12 seconds of “thinking,” it provided a detailed response, explaining its reasoning at each step. It suggested prioritizing oven space and even proposed renting a portable oven. While it outperformed GPT-4o on this task, it also produced overwhelming answers to simpler questions.

For instance, when asked where to find cedar trees in America, o1 delivered an 800+ word response, outlining every variation of cedar tree. GPT-4o provided a concise, three-sentence answer.

Tempering Expectations

The hype around o1 started in November 2023, leading some to speculate that it was a form of AGI. However, OpenAI CEO Sam Altman clarified that o1 is not AGI and is still flawed and limited. The AI community is coming to terms with a less exciting launch than expected.

Rohan Pandey, a research engineer with AI startup ReWorkd, notes, “The hype sort of grew out of OpenAI’s control.” Mike Conover, Brightwave CEO, adds, “Everybody is waiting for a step function change for capabilities, and it is unclear that this represents that.”

The Value of OpenAI o1

The principles behind o1 date back years. Google used similar techniques in 2016 to create AlphaGo. Andy Harrison, former Googler and CEO of the venture firm S32, points out that this brings up an age-old debate in the AI world. One camp believes in automating workflows through an agentic process, while the other thinks generalized intelligence and reasoning would eliminate the need for workflows.

Katanforoosh sees o1 as a tool for questioning your thinking on big decisions. For example, it could help work out how to assess a data scientist’s skills within a 30-minute interview. However, the question remains whether this helpful tool is worth the hefty price tag.

The Future of AI in Asia

The release of o1 raises questions about the future of AI, particularly in Asia. The most capable models, like o1, are also among the most expensive to run. The trade-off between cost and capability will shape how AI is adopted and used in the region.

Comment and Share:

What are your thoughts on OpenAI’s o1 model? Have you tried it yet? Share your experiences and thoughts on the future of AI and AGI in the comments below. Don’t forget to subscribe for updates on AI and AGI developments.

You may also like:

- The View From Koo: Prepare for the AI Age with Your Family

- AI Takes on Math: Google’s Breakthroughs in Reasoning

- 10 Amazing GPT-4o Use Cases

- To learn more about OpenAI’s o1, tap here.


Discover more from AIinASIA

Subscribe to get the latest posts sent to your email.

You may like

-

Can PwC’s new Agent OS Really Make AI Workflows 10x Faster?

-

OpenAI’s New ChatGPT Image Policy: Is AI Moderation Becoming Too Lax?

-

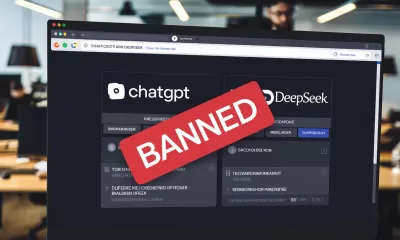

DeepSeek Dilemma: AI Ambitions Collide with South Korean Privacy Safeguards

-

Reality Check: The Surprising Relationship Between AI and Human Perception

-

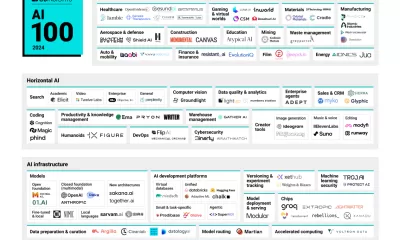

AI 100: The Hottest AI Startups of 2024 – Who’s In, Who’s Winning, and What’s Next for Asia?

-

Bot Bans? India’s Bold Move Against ChatGPT and DeepSeek

Tools

Can AI Videos Really Boost Your Brand’s Authenticity?

AI-generated videos are reshaping brand authenticity and audience trust. Discover actionable insights from the 2025 AI Sentiment Report.

Published April 8, 2025 by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- Consumers overwhelmingly accept AI-generated videos, seeing them as innovative and authentic.

- Brands must transparently disclose their use of AI to enhance trust.

- High-quality, personalised content blending AI with human creativity is critical for engagement.

How Brands In Asia Can Navigate Trust, Transparency, and Creativity Through AI-generated Video Content

Picture this: your favourite brand releases an amazing video campaign that grabs your attention, moves you, and makes you want to share it instantly. Now imagine learning that this compelling video wasn’t crafted by humans alone—it was produced using AI. Would your trust in that brand suddenly plummet, or would you see it as innovative?

The answer might surprise you. A recent global survey of 2,385 consumers has shown a fascinating shift: the majority not only accept but actively embrace AI-generated videos.

Let’s dig into what this means for brands across Asia, and how you can harness AI video to boost creativity, authenticity, and consumer trust.

The AI video landscape: a quick snapshot

Video has become the lifeblood of digital communication. In 2023 alone, over three billion people worldwide engaged with video content, solidifying its role as the content king. Today, 86% of businesses rely on video marketing, and the data tells us why: 90% of consumers confirm that videos significantly influence their purchasing decisions.

As the appetite for engaging, relevant video content grows, brands face the challenge of creating high-quality videos swiftly and affordably. Enter AI-generated videos—a game-changer that promises efficiency, creativity, and scale. But is the audience on board?

Seven essential insights brands need to know from the 2025 AI Sentiment Report:

1. Audiences are comfortable with AI-generated videos

- 90.9% have no issue with brands using AI-generated videos.

- Words like “innovative,” “modern,” and “efficient” cropped up regularly in responses, reflecting a positive shift in consumer sentiment.

2. AI enhances authenticity and creativity (yes, really!)

- 89.1% said AI doesn’t negatively impact brand perception.

- 62.8% agree AI-generated videos enhance brand creativity and storytelling.

- Smaller brands now have a chance to compete creatively with big-budget campaigns, levelling the playing field significantly.

3. Transparency isn’t optional

- 75.6% consider transparency about AI usage essential.

- Audiences prefer clear labels/icons (59.4%), descriptions (25.5%), or social hashtags (13.7%) to indicate AI-generated content.

4. Human creativity still matters—a lot

- A striking 85.5% of respondents react positively when human involvement complements AI-produced videos.

- Brands that blend AI efficiency with human touch achieve stronger engagement and mitigate authenticity concerns.

5. Quality directly impacts trust

- Over half (54.2%) say high-quality AI videos increase brand trust.

- Conversely, poor-quality videos quickly erode consumer confidence: 10.3% say such videos make them lose trust.

6. Social media leads, but don’t neglect other platforms

- Social media tops the list (69.4%) for consumer comfort with AI-generated videos.

- Tutorials (31.1%), product demos (30.4%), and even customer support (27.3%) show AI video’s remarkable versatility.

7. Personalised content drives deeper engagement

- 52.2% engage more with high-quality videos, while 25.1% prefer personalised content.

- This highlights the need for strategic targeting and tailored storytelling for Asian consumers, who value relevance highly.

Actionable roadmap for Asian brands: combining AI with authenticity

The path forward is clear for Asian brands aiming to harness AI-generated video effectively:

- Prioritise transparency: Clearly label AI-generated content using recognisable icons or descriptions. Consumers value openness—so shout it loud and clear.

- Invest in quality: Use advanced AI video tools to produce professional, lifelike, and compelling content. Your audience expects—and rewards—excellence.

- Blend AI and human creativity: Don’t rely on AI alone. Human storytelling enriches AI-generated content, creating videos that resonate emotionally with viewers.

- Choose your channels wisely: Deploy AI videos strategically on social media, tutorials, product demos, and customer interactions. Meet your audience where they feel comfortable engaging.

- Personalise, personalise, personalise: Craft targeted messages that reflect your audience’s interests, values, and cultural contexts. Especially in diverse Asian markets, personalised content ensures deeper connections.

Final thoughts: Is AI the future of video marketing in Asia?

Absolutely. AI video isn’t just a flashy tech trend—it’s rapidly becoming essential to effective digital storytelling. Asian brands have an exciting opportunity to lead in this area by balancing innovation with transparency and authenticity.

As AI continues to evolve, expect more immersive, interactive experiences that redefine consumer engagement. Embrace this evolution now, and you’ll set your brand up not just to meet but to exceed consumer expectations.

Ready to explore more on AI’s growing role in shaping consumer sentiment in 2025?

Keep following AIinASIA for deeper dives and practical insights.

You may also like:

- You can download the full report for free by tapping here

- Unveiling the Dark Side of AI: The Transparency Dilemma in the AI Market

- Unleash Your Inner Artist with Google Gemini’s Free AI Image Generator


Tools

The Secret to Using Generative AI Effectively In 2025

Struggling with genAI? You might be prompting it wrong. Discover the key to getting meaningful results from ChatGPT and other generative AI tools in 2025.

Published March 31, 2025 by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- To get better genAI results, externalise your internal dialogue.

- Don’t prompt like it’s 2023: ramble, with plenty of detail.

- Treat genAI as a brainstorming partner, not a search engine.

Think GenAI Sucks? You’re Probably Using It Wrong.

Do you secretly (or perhaps openly) think generative AI (genAI) sucks? For a while, so did I. But using it effectively can change your mind.

Frankly, the early hype around genAI was painful. Remember when ChatGPT exploded in early 2023, and everyone rushed to cram it into their businesses? Those were rough times. Companies, convinced they could instantly replace human talent, laid off skilled workers only to realise later their shiny new AI toys weren’t up to scratch.

Fast-forward to 2025, and the good news is: genAI is finally useful. But there’s a catch—you have to rethink how to use it.

The Surprising Secret: Externalising Your Inner Dialogue

Here’s the thing: genAI doesn’t thrive on short, neat prompts like traditional Google searches. It needs your messy thoughts. In other words, you need to ramble.

Imagine you’re trying to recall that elusive word, the one that perfectly captures a feeling. In Google, you’d agonise over the perfect keyword combo. But with ChatGPT? Just pour out your thoughts:

“What’s the word for a soft feeling that’s warm, but a bit cold? It’s a bit sad, but not quite… you miss something, but you’re glad you miss it. Like walking home from school on a sunny autumn day, knowing winter’s coming and summer’s gone—but you’re happy it happened.”

ChatGPT will understand you’re reaching for “wistful,” or at least point you to it. This kind of rambling, stream-of-consciousness prompting unlocks genAI’s best results.

Why Rambling Works Better Than You Think

To really see genAI shine, do this experiment:

- Open ChatGPT on your phone.

- Tap the microphone button (the one next to the chat box—not the voice chat mode).

- Ramble freely about whatever you’re looking for. Searching for a TV show? Talk about what you like, dislike, your recent binges, random stuff—yes, really random stuff.

- Let your thoughts flow for a minute or two, then hit send without editing. Leave the typos, ums, and randomness in.

You’ll get back a response that’s surprisingly on-point. Even better, it’ll suggest avenues you hadn’t considered. Your initial messy prompt becomes a rich context for further exploration.

Back-and-forth Is Your Friend

Say you want the perfect marketing tagline. Start broad, then iterate:

- Get rough ideas first.

- Narrow down based on your reactions.

- Keep steering the conversation with more detailed feedback.

“I like the third idea, but make it punchier. Number six feels too formal.”

Crucially, you’re still in charge. The AI provides ideas; you provide the judgement. If it strays, simply say, “Not quite right—it’s for a major clothing brand, not a tech startup. Keep it professional but catchy.” The more specific you are, the better it adapts.

AI Productivity Tips: It’s About Co-discovery, Not Automated Decision-making

Think of genAI as your brainstorming partner, not an answer machine. It’s there to surface ideas, connections, and concepts, but you’re still calling the shots. You’re still the one deciding what’s worth pursuing.

Yes, genAI will sometimes hallucinate or miss the mark. That’s fine—just redirect it. Tell it exactly why it’s off-track. The beauty of genAI is its adaptability, not its perfection. Using generative AI effectively involves understanding its limitations and guiding it accordingly.

The Bigger AI Picture: Companies Have Been Selling It Wrong

If you’re sceptical of genAI, that makes sense. The industry’s been pitching it incorrectly, presenting it as a magical decision-maker. No wonder smart people roll their eyes.

GenAI isn’t a replacement for your brain; it’s a tool to extend it. You might still brainstorm traditionally, browse Google, or take long walks to think. Perfect. Generative AI is just another way to spark those insights faster.

What do YOU think?

Are you ready to stop treating genAI like a vending machine and start chatting with it like a friend?

You may also like:

- GenAI in Asia: 5 Steps to Embrace the Future and Mitigate Risks

- AI Revolution: How AI Creates Equal Learning and Job Opportunities

- Where Can Generative AI Be Used to Drive Strategic Growth?

- Tap here to try this approach on the free version of ChatGPT.


News

Adobe Jumps into AI Video: Exploring Firefly’s New Video Generator

Explore Adobe’s Firefly Video Generator for commercially safe, AI-driven video creation from text or images, plus easy integration and flexible subscription plans.

Published March 18, 2025 by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- Adobe Has Launched a New AI Video Generator: Firefly Video (beta) is now live for anyone who’s signed up for early access, promising safe and licensed content.

- Commercially Safe Creations: The video model is trained only on licensed and public domain content, reducing the headache of potential copyright issues.

- Flexible Usage: You can create 5-second, 1080p clips from text prompts or reference images, add extra effects, and blend seamlessly with Adobe’s other tools.

- Subscription Plans: Ranging from 10 USD to 30 USD per month, you’ll get a certain number of monthly generative credits to play with, along with free cloud storage.

So, What is the Adobe Firefly Video Generator?

If you’ve been keeping an eye on the AI scene, you’ll know it’s bursting with new tools left, right, and centre. But guess who has finally decided to join the party, fashionably late but oh-so-fancy? That’s right — Adobe! The creative software giant has just unveiled its generative AI video tool, Firefly Video Generator. Today, we’re taking a closer look at what it does, why it matters, and whether it’s worth your time.

If you’ve heard whispers about Adobe’s foray into AI, it’s all about Firefly, its suite of AI-driven creative tools. Adobe has now extended Firefly to video, letting you turn text or images into short video clips. At the moment, each clip is around five seconds long, in 1080p resolution, and is delivered as an MP4 file.

In Adobe’s own words: “Generate Video (beta) is now available. Powered by the Adobe Firefly Video Model, Generate Video (beta) lets you generate new, commercially safe video clips with the ease of creative AI.”

The unique selling point is that Firefly’s video model is trained only on licensed and public domain material, so you can rest easier about copyright concerns. Whether you’re a content creator, a social media guru, or just love dabbling in AI, this tool might be your new favourite playground.

Getting Started: Text-to-Video in a Flash

Interested? Here’s the easiest way in:

- Sign In: Head over to firefly.adobe.com and log in or sign up for an Adobe account.

- Select “Text to Video”: Once logged in, you’ll see a selection of AI tools under the Featured tab. Pick “Text to Video,” and you’re in!

- Craft a Prompt: Type out a description of what you want to see. For best results, Adobe recommends specifying the shot type, character, action, location, and aesthetic. The more detail the better, up to 175 words. For example:

Prompt: A futuristic cityscape at sunset with neon lights reflecting off wet pavement. The camera pans over a sleek, silver skyscraper, then zooms in on a group of drones flying in formation, their lights pulsating in sync with the city’s rhythm. The scene transitions to a close-up of a holographic advertisement displaying vibrant, swirling patterns. The video ends with a wide shot of the city, capturing the dynamic interplay of light and technology.

- Generate: Hit the Generate button and watch Firefly work its magic. Stay on the tab while it’s generating, or your progress disappears (a bit of a quirk, if you ask me).

The end result is a 5-second video clip in MP4 format, complete with 1920 × 1080 resolution. You can’t exactly produce a Hollywood blockbuster here, but for quick, creative clips, it’s pretty handy.

Here’s another one:

A cheerful, pastel-colored cartoon rabbit wearing a pair of oversized sunglasses and a Hawaiian shirt. The rabbit is standing on a sunny beach, surrounded by palm trees and colorful beach balls. As it dances to upbeat music, it starts to juggle three beach balls while spinning around. The camera zooms out to show the rabbit’s shadow growing larger, transforming into a giant beach ball that bounces across the sand. The video ends with the rabbit laughing and winking at the camera.
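Adobe’s five recommended ingredients (shot type, character, action, location, aesthetic) and its 175-word ceiling can be turned into a simple checklist. The Python sketch below is a hypothetical helper for drafting prompts before pasting them into the Firefly web app. It is not an Adobe API, and the one-sentence-per-element format is an assumption.

```python
MAX_PROMPT_WORDS = 175  # Adobe's recommended upper limit for a prompt


def build_prompt(shot_type: str, character: str, action: str,
                 location: str, aesthetic: str) -> str:
    """Join the five recommended elements into one prompt, one sentence each."""
    parts = [shot_type, character, action, location, aesthetic]
    if any(not part.strip() for part in parts):
        raise ValueError("fill in all five elements for best results")

    # Normalise each element to a single sentence ending in a full stop.
    prompt = " ".join(part.strip().rstrip(".") + "." for part in parts)

    # Enforce the word limit before the prompt goes anywhere near Firefly.
    word_count = len(prompt.split())
    if word_count > MAX_PROMPT_WORDS:
        raise ValueError(
            f"prompt has {word_count} words; keep it to {MAX_PROMPT_WORDS} or fewer"
        )
    return prompt


if __name__ == "__main__":
    print(build_prompt(
        shot_type="Slow zoom-out wide shot",
        character="A cheerful cartoon rabbit in oversized sunglasses and a Hawaiian shirt",
        action="It dances and juggles three beach balls while spinning around",
        location="A sunny beach lined with palm trees",
        aesthetic="Pastel colors, bright and playful animation style",
    ))
```

Failing early on a missing element or an over-long prompt saves a wasted generation, and therefore wasted credits.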

Image-to-Video: Turn That Pic into Motion

If you prefer a visual reference to a text prompt, Firefly also has your back. You can upload an image, which must be one you own the rights to, and let the AI interpret it in video form. As Adobe warns:

“To use this feature, you must have the rights to any third-party images you upload. All images uploaded or content generated must meet our User Guidelines. Access will be revoked for any violation.”

Once uploaded, you can tweak the ratio, camera angle, motion, and more to shape your final clip. This is a brilliant feature if you’re working on something that requires a specific style or visual element and you’d like to keep that vibe across different shots.

A Dash of Sparkle: Adding Effects

A neat trick up Adobe’s sleeve is the ability to layer special effects like fire, smoke, dust particles, or water over your footage. The model can generate these elements against a black or green screen, so you can easily apply them as overlays in Premiere Pro or After Effects.

In practical terms, you could generate smoky overlays to give your scene a dramatic flair or sprinkle dust particles for a cinematic vibe. Adobe claims these overlays blend nicely with real-world footage. That’s a plus if you want subtle special effects without shelling out for expensive stock footage.

How Much Does Adobe Firefly Cost?

There are two main plans if you decide to adopt Firefly into your daily workflow:

- Adobe Firefly Standard (10 USD/month)

  - You get 2,000 monthly generative credits for video and audio, which means you can generate up to 20 five-second videos and translate up to 6 minutes of audio and video.

  - Useful for quick clip creation, background experimentation, and playing with different styles in features like Text to Image and Generative Fill.

- Adobe Firefly Pro (30 USD/month)

  - This plan offers 7,000 monthly generative credits for video and audio, allowing you to generate up to 70 five-second videos and translate up to 23 minutes of audio and video.

  - Great for those looking to storyboard entire projects, produce b-roll, and match audio cues for more complex productions.

Both plans also include 100 GB of cloud storage, so you don’t have to worry too much about hoarding space on your own system. They come in monthly or annual prepaid options, and you can cancel anytime without fees — quite flexible, which is nice.
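The plan figures above imply a flat rate of 100 credits per five-second clip (2,000 ÷ 20 and 7,000 ÷ 70). The Python sketch below uses that inferred rate to compare the two plans. It assumes every credit goes to video generation, whereas in reality the credits are shared with audio and video translation. It also ignores any annual-prepaid pricing.

```python
# Plan details as quoted in the article; CREDITS_PER_CLIP is inferred from them.
PLANS = {
    "Standard": {"usd_per_month": 10, "credits": 2_000},
    "Pro": {"usd_per_month": 30, "credits": 7_000},
}
CREDITS_PER_CLIP = 100  # 2,000 / 20 = 7,000 / 70 = 100 (inferred, not official)
SECONDS_PER_CLIP = 5


def clips_per_month(credits: int) -> int:
    """Whole five-second clips a monthly credit allowance can cover."""
    return credits // CREDITS_PER_CLIP


def usd_per_clip(plan: dict) -> float:
    """Effective price of one clip, assuming all credits are spent on video."""
    return plan["usd_per_month"] / clips_per_month(plan["credits"])


if __name__ == "__main__":
    for name, plan in PLANS.items():
        clips = clips_per_month(plan["credits"])
        print(f"{name}: {clips} clips ({clips * SECONDS_PER_CLIP} s of footage), "
              f"about ${usd_per_clip(plan):.2f} per clip")
```

On these assumptions, Pro works out slightly cheaper per clip (about $0.43 versus $0.50). The upgrade therefore pays off mainly if you need the extra volume.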

First Impressions: Late to the Party?

Overall, Firefly’s biggest plus is its library of training data. Because it only uses Adobe-licensed or public domain content, creators can produce videos without fear of accidental infringement. This is a big deal, considering how many generative AI tools out there scrape the web, causing all sorts of copyright drama.

Adobe’s integration with its existing ecosystem is another big draw. If you’re already knee-deep in Premiere Pro and After Effects, having a built-in system for AI-generated overlays, quick b-roll clips, and atmospheric effects might streamline your workflow.

But let’s be honest: the AI video space is already pretty jam-packed. Competitors like Runway, Kling, and Sora from OpenAI have been around for a while, offering equally interesting features. So the question is, does Firefly do anything better or more reliably than the rest? You’ll have to try it out for yourself (and please let us know your thoughts in the comments below).

Until Adobe adds more advanced features or speeds up its render times, Firefly may struggle to stand out. However, you can’t knock it until you’ve tried it. Adobe does offer free video generation credits, so have a go: generate your own videos, add flaming overlays, and see if the results suit your style.

Will Adobe’s trusted brand name and integrated workflow features push Firefly Video Generator to the top of the AI video world? Or is this too little, too late?

Ultimately, you’re the judge. The AI video revolution is in full swing, and each platform has its own perks and quirks.

Wrapping Up & Parting Thoughts

Adobe’s Firefly Video Generator is an exciting new player that’s sure to turn heads. If you’re already an Adobe devotee, it makes sense to give it a whirl and see how seamlessly it slides into your existing workflow. You’ll enjoy its straightforward interface, the security of licensed content, and some neat editing options.

But with so many alternatives on the market, is Firefly truly innovative, or just the next step in AI’s unstoppable march through our creative spaces?

Could Adobe’s pedigree and safe licensing edge truly redefine AI video for commercial use, or is the industry already oversaturated with better and bolder solutions?

You may also like:

- Revolutionising the Creative Scene: Adobe’s AI Video Tools Challenge Tech Giants

- Adobe’s GenAI is Revolutionising Music Creation

- Try out the Adobe Firefly Video Generator for free by tapping here
