South Korea's $850 Million AI Textbook Experiment Crashes Within Four Months

When South Korea launched its ambitious AI Digital Textbook Promotion Plan in March 2025, officials promised personalised learning, reduced teacher workloads, and lower dropout rates. Eight months later, the initiative lies in tatters, serving as a cautionary tale about rushing unproven technology into classrooms without proper preparation.

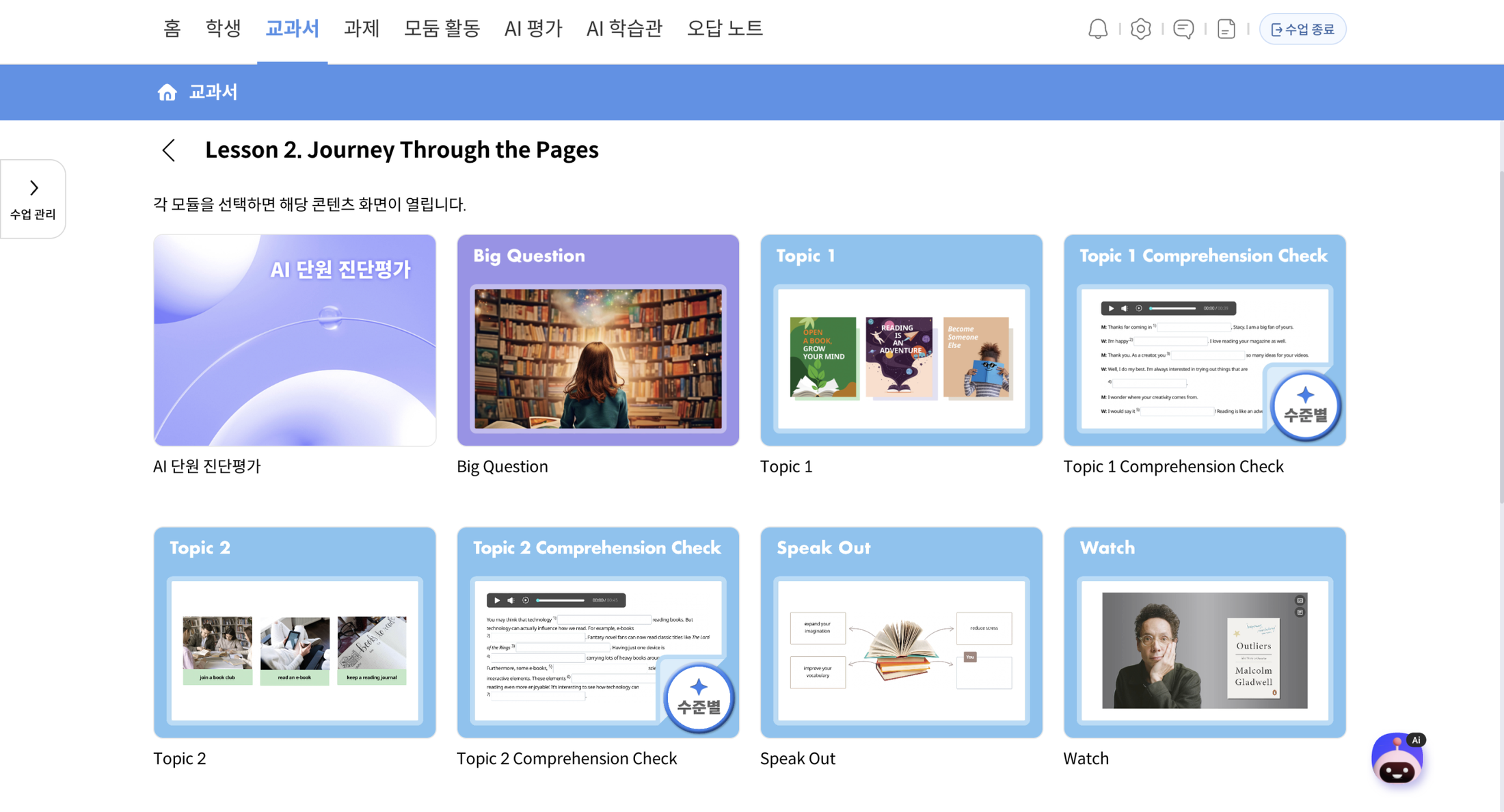

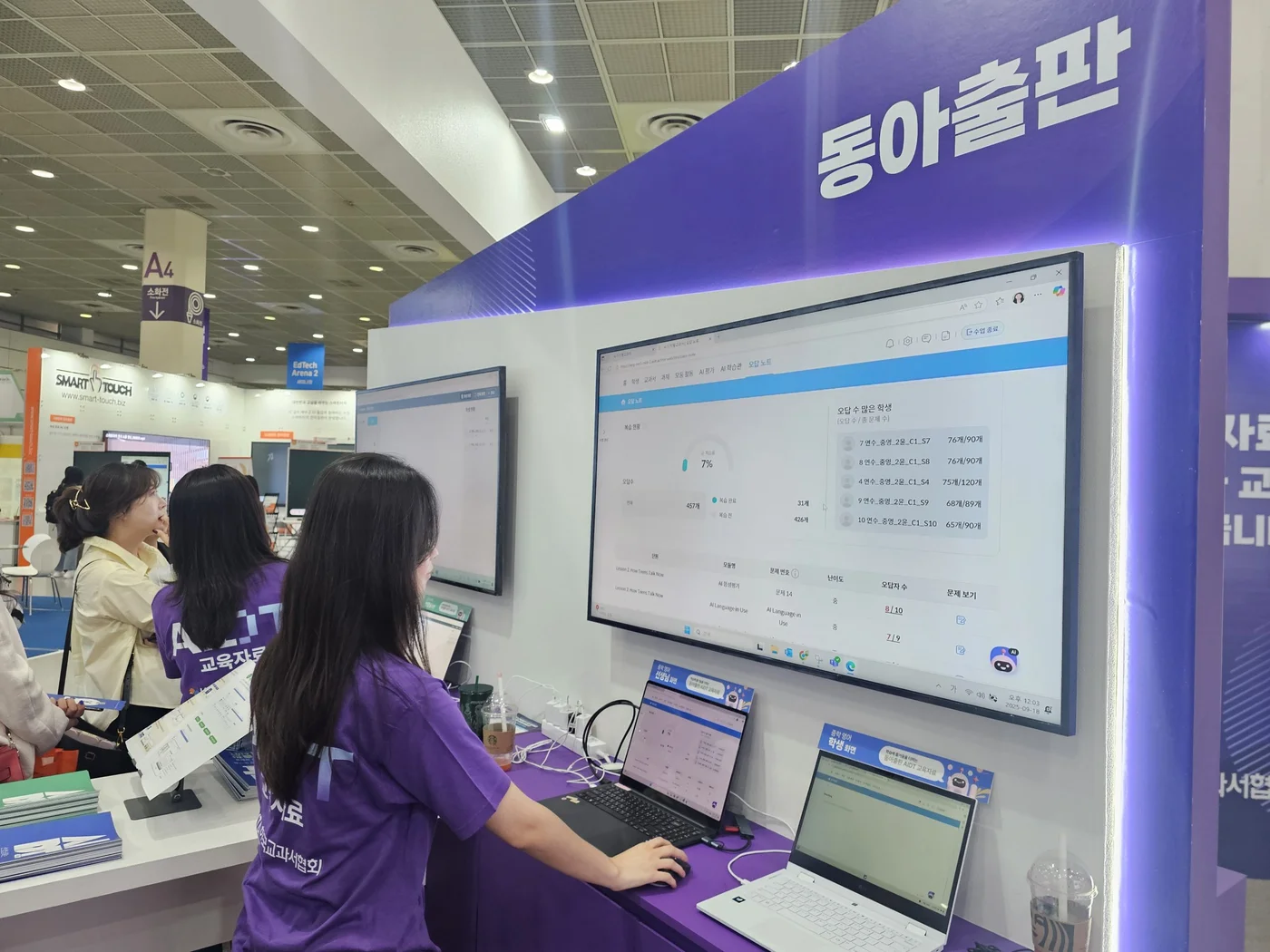

The government's grand vision involved rolling out 76 AI-powered textbooks across mathematics, English, and coding subjects to thousands of schools. Former President Yoon Suk Yeol championed the project, partnering with a dozen publishing companies in what seemed like South Korea's next leap forward in educational innovation.

Instead of streamlining education, the textbooks created chaos. Technical glitches delayed classes, errors riddled the content, and teachers found themselves spending more time troubleshooting than teaching.

Technical Failures and Quality Control Issues Plague Rollout

The problems started immediately. Students and teachers reported widespread technical failures that brought lessons to a grinding halt. Ko Ho-dam, a junior at a Jeju Island high school, described the frustration:

"All our classes were delayed because of technical problems with the textbooks. I also didn't know how to use them well."

Teachers found the quality unacceptably poor. A high school mathematics instructor explained the fundamental issues:

"Monitoring students' learning progress with the books in class was challenging. The overall quality was poor, and it was clear it had been hastily put together."

The AI-powered features that were supposed to personalise learning often malfunctioned, whilst factual errors throughout the content undermined their educational value. This mirrors broader challenges we've seen with AI implementation across Asia's education sector, where rushed deployment often leads to disappointing results.

Government promises of faster publishing through AI proved equally hollow, with at least one publisher experiencing significant delays rather than improved efficiency.

By The Numbers

- Total project cost: 1.2 trillion won ($850 million), with the programme collapsing after four months

- Participation drop: From 37% of schools in first semester to just 19% after reclassification

- Elementary school usage rates: Only 28.6-29.1% for English and mathematics by March 2025

- Teacher training gaps: 98.5% of 2,626 surveyed teachers reported insufficient AI textbook training

- School opt-outs: Over half of 4,095 participating schools abandoned the programme by mid-October
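
For readers who want to sanity-check the figures above, here is a minimal back-of-envelope sketch. It only reuses the numbers quoted in the list; the variable names are illustrative, the exchange rate is merely the one implied by the two cost figures (not an official rate), and the derived counts are rough estimates rather than reported data.

```python
# Figures as quoted in the list above (inputs only; nothing here is new data).
TOTAL_COST_WON = 1.2e12          # 1.2 trillion won
TOTAL_COST_USD = 850e6           # $850 million
SURVEYED_TEACHERS = 2_626
UNDERTRAINED_SHARE = 0.985       # 98.5% reported insufficient training
PARTICIPATING_SCHOOLS = 4_095
SHARE_BEFORE = 0.37              # share of schools, first semester
SHARE_AFTER = 0.19               # share of schools, after reclassification

# Exchange rate implied by the won and dollar cost figures (an inference).
implied_won_per_usd = TOTAL_COST_WON / TOTAL_COST_USD

# Approximate number of surveyed teachers reporting insufficient training.
undertrained_teachers = round(SURVEYED_TEACHERS * UNDERTRAINED_SHARE)

# "Over half" of the participating schools means at least this many.
min_schools_opted_out = PARTICIPATING_SCHOOLS // 2 + 1

# Participation drop, in percentage points and relative to the starting share.
drop_points = round((SHARE_BEFORE - SHARE_AFTER) * 100)
relative_drop = round((SHARE_BEFORE - SHARE_AFTER) / SHARE_BEFORE, 3)

print(f"Implied exchange rate: ~{implied_won_per_usd:,.0f} won per dollar")
print(f"Teachers reporting insufficient training: ~{undertrained_teachers:,}")
print(f"Schools that opted out: at least {min_schools_opted_out:,}")
print(f"Participation drop: {drop_points} points ({relative_drop:.1%} relative)")
```

On these inputs, the participation figure fell by 18 percentage points, which is close to half of the starting share.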

From Mandate to Optional Afterthought

The programme's trajectory from triumphant launch to quiet burial reveals much about South Korea's approach to educational technology. Education Minister Lee Joo-ho initially declared the textbooks legally mandatory, but fierce public backlash forced a rapid retreat to voluntary pilot status for just one academic year.

By August 2025, following President Yoon's impeachment and political shifts, lawmakers formally revoked the mandatory requirement. The writing was already on the wall: adoption rates varied dramatically by political geography, with conservative Daegu maintaining 98% usage whilst liberal Sejong dropped to just 8%.

October brought the final blow. Mounting complaints forced officials to quietly reclassify the textbooks as "supplemental materials," giving schools permission to abandon them entirely. The move provided face-saving cover for what was clearly a failed experiment.

| Timeline | Status | Usage Rate | Key Development |
|---|---|---|---|
| March 2025 | Mandatory Launch | 37% | Nationwide rollout begins |
| August 2025 | Policy Reversal | 25% | Mandatory status revoked |
| October 2025 | Supplemental Only | 19% | Schools free to opt out |
| December 2025 | Effective Abandonment | 15% | Widespread discontinuation |

Publishers Face Financial Ruin Amid Programme Collapse

Whilst students and teachers breathed sighs of relief, the publishing companies involved faced potential ruin. Having invested $567 million against the government's $850 million commitment, they suddenly found their products relegated to optional status with plummeting demand.

The industry response has been swift and desperate. Publishers formed the "AI Textbook Emergency Response Committee" and filed a constitutional petition demanding the government reverse its decision. Their argument centres on survival: the reclassification threatens their very existence after massive investments in AI-powered content development.

The situation reflects broader concerns about South Korea's aggressive AI commercialisation efforts, where rapid deployment often prioritises speed over quality. Unlike more measured approaches seen in Singapore's methodical innovation strategies, South Korea's rush to market has created significant casualties.

This textbook debacle joins a growing list of cautionary tales about premature AI deployment. Consider key lessons from this failure:

- Insufficient testing periods led to widespread technical failures in live classroom environments

- Poor quality control resulted in factual errors that undermined educational credibility

- Inadequate teacher training left 98.5% of educators unprepared for AI-powered tools

- Political backing alone cannot overcome fundamental product deficiencies

- Rush-to-market strategies risk massive financial losses for private sector partners

Global Implications for AI in Education

South Korea's textbook disaster offers critical lessons for other nations considering similar AI-driven educational reforms. The failure highlights how enterprise AI pilots across Asia often struggle to reach production, particularly when deployment timelines prioritise political announcements over technical readiness.

The contrast with successful AI integration elsewhere is stark. Singapore and Korea's recent $300 million AI alliance emphasises careful collaboration and measured progress, suggesting South Korea has learned from this expensive mistake.

Regional competitors are taking note. Whilst South Korea's textbook experiment collapsed, other Asian nations have adopted more cautious approaches to educational AI, focusing on pilot programmes and extensive teacher training before any large-scale deployment.

What caused South Korea's AI textbook programme to fail so quickly?

Technical glitches, factual errors, inadequate teacher training, and poor quality control created classroom chaos. The rushed deployment prioritised political timelines over product readiness, leading to widespread dysfunction.

How much money was lost in the failed initiative?

The government committed $850 million total, with publishers investing $567 million before the programme's collapse after just four months of operation.

What happened to schools that adopted the AI textbooks?

Over half of 4,095 participating schools opted out by October 2025. Usage rates dropped from 37% to 19% as the programme shifted from mandatory to optional status.

Are other countries planning similar AI textbook programmes?

Most Asian nations have adopted more cautious approaches after observing South Korea's failure, emphasising pilot testing and teacher training over rapid nationwide deployment.

What lessons does this offer for future educational AI projects?

Successful AI integration requires extensive testing, proper teacher training, robust quality control, and realistic timelines rather than political pressure for rapid deployment.

The collapse of South Korea's AI textbook initiative serves as a stark reminder that technological innovation without proper preparation leads to expensive failures. As other Asian nations watch and learn from this debacle, the question remains: will they heed the lessons of South Korea's rushed experiment, or repeat similar mistakes in their own educational AI ventures?