News

Microsoft & Perplexity Give DeepSeek Their Stamp of Approval

Discover how DeepSeek R1, a Chinese open-source model, is integrated by Microsoft and Perplexity despite censorship and data privacy concerns.

Published 2 months ago by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- DeepSeek R1, a Chinese open-source reasoning model, has been integrated by Microsoft (via Azure AI Foundry and GitHub) and Perplexity (for Pro subscribers).

- Concerns remain over data privacy, with Wiz discovering a vulnerability exposing over a million records.

- Censorship fears plague DeepSeek’s official version, but Perplexity claims to have bypassed those issues.

- Microsoft asserts that its evaluation process ensures a “secure, compliant” environment for enterprise users, while OpenAI accuses DeepSeek of training on its models.

- The model is cheap, powerful, and quickly gaining popularity—raising questions about broader implications for AI innovation and geopolitics.

DeepSeek R1 in Microsoft & Perplexity Integrations

Hello, dear readers of AIinASIA! Buckle up because today’s story is a whirlwind of controversy, tech breakthroughs, and a dash of political intrigue. If you’ve ever wondered about the future of Chinese-developed AI models on the global stage, you’re in for a treat. In today’s article, we’re talking about a certain trailblazing model called DeepSeek R1—and how Microsoft and Perplexity are making waves by integrating it into their platforms, despite the uproar surrounding data privacy and censorship concerns.

Microsoft & Perplexity Give DeepSeek Their Stamp of Approval

Let’s dive in: DeepSeek R1, fresh off the press just 10 days ago, has already found a home with some American tech powerhouses. Both Microsoft and Perplexity have integrated DeepSeek’s model into their offerings. On Microsoft’s side, you can find R1 on Azure AI Foundry (for subscribers) and on GitHub (free to access). Perplexity, meanwhile, is providing DeepSeek R1 to its Pro subscribers (currently $20/month), putting it alongside other AI heavy-hitters like OpenAI’s o1 and Anthropic’s Claude 3.5.

But hang on—this isn’t just a simple case of “Chinese model meets US platforms.” DeepSeek had plenty of privacy and censorship red flags raised against it. So, how did these American companies feel confident enough to go ahead with it?

Well, according to Microsoft, the company conducted “rigorous red teaming and safety evaluations.” Its official stance is that by accessing DeepSeek R1 through Microsoft services, users get a “secure, compliant, and responsible environment for enterprises.” In other words, Microsoft is telling the world, “No worries—our version is safer!”

Perplexity echoes that sentiment, boldly claiming its version of DeepSeek isn’t censored by the Chinese government. In fact, Perplexity CEO Aravind Srinivas even took a jab at the default DeepSeek chatbot, pointing out how it refused to discuss topics like the Tiananmen Square Massacre or the treatment of Uyghurs. And if you tried talking to it about Taiwan—the responses suspiciously sounded like they came straight from Chinese Communist Party (CCP) talking points. Perplexity says it has completely dodged those issues by tweaking the model to keep it “off-script.”

Data Privacy Snags & Vulnerabilities

Just when we thought things were cruising along nicely, a security research firm called Wiz dropped a bombshell. It discovered a DeepSeek data vulnerability exposing over a million records—ranging from sensitive data to chat logs—publicly on the web. Talk about a privacy nightmare!

Wiz disclosed the problem to DeepSeek, which then patched the flaw. But another data-collection concern remains: If you chat on the official chat.deepseek.com website, there’s every chance the Chinese government could lay its hands on your data. For many, that’s simply a risk not worth taking.

Thankfully, not everyone is using DeepSeek in a way that might send your info across the ocean. According to Endor Labs, an open-source security company, hosting the model yourself (such as grabbing it on Hugging Face) doesn’t pose the same kind of data-sharing risk. Microsoft is even hinting that a “distilled” version of DeepSeek could soon be coming to Copilot+ PCs for local usage—promising better speed, privacy, and efficiency.

OpenAI’s Not Happy

Here’s the spicy part: OpenAI has accused DeepSeek of secretly training on its own models. Although OpenAI hasn’t gone into detail, White House “AI Czar” David Sacks told Fox News there’s “substantial evidence” to back up that claim. Critics, however, have pointed fingers at OpenAI’s own data-gathering practices, saying it, too, has used “stolen IP.” What’s that saying about people in glass houses?

Whatever the truth, you can see why OpenAI might not be thrilled about DeepSeek’s rising popularity. If a brand-new model from a competitor can match or outperform established giants, it puts pressure on the entire ecosystem to innovate faster.

So, What’s Next?

DeepSeek R1 doesn’t look like it’s going away anytime soon—especially now that big names like Microsoft and Perplexity have legitimised it. We can likely expect this model to spread even further across the AI landscape, given its blend of low cost, high performance, and the possibility of skipping that pesky censorship if you use the right channel.

Yes, there are still caveats—data vulnerabilities and political entanglements are serious considerations. But for many AI enthusiasts and developers, the allure of accessing a powerful reasoning model at a fraction of typical costs is too hard to resist. Who wouldn’t want something that can reportedly “think out loud like an intelligent person” and process “hundreds of sources” in one go?

What Do YOU Think?

Are we too quick to embrace powerful AI models from abroad—and in doing so, are we opening the door to government-level surveillance and censorship creeping into the global tech ecosystem?

As always, keep your eyes peeled right here on AIinASIA for more updates! We’ll be diving deeper into how these AI skirmishes play out on the global stage—because if there’s one thing we love around here, it’s a good AI battle royale.

Let’s Talk AI!

How are you preparing for the AI-driven future? What questions are you training yourself to ask? Drop your thoughts in the comments, share this with your network, and subscribe for more deep dives into AI’s impact on work, life, and everything in between.

You may also like:

- DeepSeek’s Rise: The $6M AI Disrupting Silicon Valley’s Billion-Dollar Game

- Perplexity Assistant: The New AI Contender Taking on ChatGPT and Gemini

- Or try the free version of DeepSeek now by tapping here.


Discover more from AIinASIA

Subscribe to get the latest posts sent to your email.



OpenAI’s New ChatGPT Image Policy: Is AI Moderation Becoming Too Lax?

ChatGPT now generates previously banned images of public figures and symbols. Is this freedom overdue or dangerously permissive?

Published March 30, 2025, by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- ChatGPT can now generate images of public figures, previously disallowed.

- Requests related to physical and racial traits are now accepted.

- Controversial symbols are permitted in strictly educational contexts.

- OpenAI argues for nuanced moderation rather than blanket censorship.

- Move aligns with industry trends towards relaxed content moderation policies.

Is AI Moderation Becoming Too Lax?

ChatGPT just got a visual upgrade—generating whimsical Studio Ghibli-style images that quickly became an internet sensation. But look beyond these charming animations, and you’ll see something far more controversial: OpenAI has significantly eased its moderation policies, allowing users to generate images previously considered taboo. So, is this a timely move towards creative freedom or a risky step into a moderation minefield?

ChatGPT’s new visual prowess

OpenAI’s GPT-4o model now brings impressive image-generation capabilities directly inside ChatGPT. With advanced photo editing, sharper text rendering, and improved spatial representation, it rivals specialised image AI tools.

But the buzz isn’t just about cartoonish visuals; it’s about OpenAI’s major shift on sensitive content moderation.

Moving beyond blanket bans

Previously, if you asked ChatGPT to generate an image featuring public figures—say Donald Trump or Elon Musk—it would simply refuse. Similarly, requests for hateful symbols or modifications highlighting racial characteristics (like “make this person’s eyes look more Asian”) were strictly off-limits.

No longer. Joanne Jang, OpenAI’s model behaviour lead, explained the shift clearly:

“We’re shifting from blanket refusals in sensitive areas to a more precise approach focused on preventing real-world harm. The goal is to embrace humility—recognising how much we don’t know, and positioning ourselves to adapt as we learn.”

In short, fewer instant rejections, more nuanced responses.

Exactly what’s allowed now?

With this update, ChatGPT can now depict public figures upon request, moving away from selectively policing celebrity imagery. OpenAI will allow individuals to opt out if they don’t want AI-generated images of themselves, giving the people depicted some control over how they appear.

Controversially, ChatGPT also now accepts previously prohibited requests related to sensitive physical traits, like ethnicity or body shape adjustments, sparking fresh debate around ethical AI usage.

Handling the hottest topics

OpenAI is cautiously permitting requests involving controversial symbols—like swastikas—but only in neutral or educational contexts, never endorsing harmful ideologies. GPT-4o also continues to enforce stringent protections, especially around images involving children, setting even tighter standards than its predecessor, DALL-E 3.

Yet, loosening moderation around sensitive imagery has inevitably reignited fierce debates over censorship, freedom of speech, and AI’s ethical responsibilities.

A strategic shift or political move?

OpenAI maintains these changes are non-political, emphasising instead its longstanding commitment to user autonomy. But the timing is provocative, coinciding with increasing regulatory pressure and scrutiny from politicians like Republican Congressman Jim Jordan, who recently challenged tech companies about perceived biases in AI moderation.

This relaxation of restrictions echoes similar moves by other tech giants—Meta and X have also dialled back content moderation after facing similar criticisms. AI image moderation, however, poses unique risks due to its potential for widespread misinformation and cultural distortion, as Google’s recent controversy over historically inaccurate Gemini images has demonstrated.

What’s next for AI moderation?

ChatGPT’s new creative freedom has delighted users, but the wider implications remain uncertain. While memes featuring beloved animation styles flood social media, this same freedom could enable the rapid spread of less harmless imagery. OpenAI’s balancing act could quickly draw regulatory attention—particularly under the Trump administration’s more critical stance towards tech censorship.

The big question now: Where exactly do we draw the line between creative freedom and responsible moderation?

Let us know your thoughts in the comments below!

You may also like:

- China’s Bold Move: Shaping Global AI Regulation with Watermarks


- Or try ChatGPT now by tapping here.




Tencent Joins China’s AI Race with New T1 Reasoning Model Launch

Tencent launches its powerful new T1 reasoning model amid growing AI competition in China, while startup Manus gains major regulatory and media support.

Published March 27, 2025, by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- Tencent has launched its upgraded T1 reasoning model

- Competition heats up in China’s AI market

- Beijing spotlights Manus

- Manus partners with Alibaba’s Qwen AI team

The Tencent T1 Reasoning Model Has Launched

Tencent has officially launched the upgraded version of its T1 reasoning model, intensifying competition within China’s already bustling artificial intelligence sector. Announced on Friday (21 March), the T1 reasoning model promises significant enhancements over its preview edition, including faster responses and improved processing of lengthy texts.

In a WeChat announcement, Tencent highlighted T1’s strengths, noting it “keeps the content logic clear and the text neat,” while maintaining an “extremely low hallucination rate,” meaning the model rarely invents false information and so produces more accurate, reliable outputs.

The Turbo S Advantage

The T1 model is built on Tencent’s own Turbo S foundational language technology, introduced last month. According to Tencent, Turbo S responds to queries faster than competitor DeepSeek’s R1 model, and benchmarks Tencent shared in its announcement showed T1 leading in several key knowledge and reasoning categories.

Tencent’s latest launch comes amid heightened rivalry sparked largely by DeepSeek, a Chinese startup whose powerful yet affordable AI models recently stunned global tech markets. DeepSeek’s success has spurred local companies like Tencent into accelerating their own AI investments.

Beijing Spotlights Rising AI Star Manus

The race isn’t limited to tech giants. Manus, a homegrown AI startup, also received a major boost from Chinese authorities this week. On Thursday, state broadcaster CCTV featured Manus for the first time, comparing its advanced AI agent technology favourably against more traditional chatbot models.

Manus became a sensation globally after unveiling what it claims to be the world’s first truly general-purpose AI agent, capable of independently making decisions and executing tasks with minimal prompting. This autonomy differentiates it sharply from existing chatbots such as ChatGPT and DeepSeek.

Crucially, Manus has now cleared significant regulatory hurdles. Beijing’s municipal authorities confirmed that a China-specific version of Manus’ AI assistant, Monica, is fully registered and compliant with the country’s strict generative AI guidelines, a necessary step before public release.

Further strengthening its domestic foothold, Manus recently announced a strategic partnership with Alibaba’s Qwen AI team, a collaboration likely to accelerate the rollout of Manus’ agent technology across China. Currently, Manus’ agent is accessible only via invite codes, with an eager waiting list already surpassing two million.

The Race Has Only Just Begun

With Tencent’s T1 now officially in play and Manus gaining momentum, China’s AI competition is clearly heating up, promising exciting innovations ahead. As tech giants and ambitious startups alike push boundaries, China’s AI landscape is becoming increasingly dynamic—leaving tech enthusiasts and investors eagerly watching to see who’ll take the lead next.

What do YOU think?

Could China’s AI startups like Manus soon disrupt Silicon Valley’s dominance, or will giants like Tencent keep the competition at bay?

You may also like:

- Tencent Takes on DeepSeek: Meet the Lightning-Fast Hunyuan Turbo S

- DeepSeek in Singapore: AI Miracle or Security Minefield?

- Alibaba’s AI Ambitions: Fueling Cloud Growth and Expanding in Asia

- Learn more by tapping here to visit the Tencent website.




Google’s Gemini AI is Coming to Your Chrome Browser — Here’s the Inside Scoop

Google is integrating Gemini AI into its Chrome browser through a new experimental feature called Gemini Live in Chrome (GLIC). Here’s everything you need to know.

Published March 25, 2025, by AIinAsia

TL;DR – What You Need to Know in 30 Seconds

- Google is integrating Gemini AI into its Chrome browser via an experimental feature called Gemini Live in Chrome (GLIC).

- GLIC adds a clickable Gemini icon next to Chrome’s window controls, opening a floating AI assistant modal.

- Currently being tested in Chrome Canary, the feature aims to streamline AI interactions without leaving the browser.

Welcoming Google’s Gemini AI to Your Chrome Browser

If there’s one thing tech giants love more than AI right now, it’s finding new ways to shove that AI into everything we use. And Google, never one to be left behind, is apparently stepping up its game by sliding Gemini AI directly into your beloved Chrome browser. Yep, that’s the buzz on the digital street!

This latest AI adventure popped up thanks to eagle-eyed folks at Windows Latest, who spotted intriguing code snippets hidden in Google’s Chrome Canary version. Canary, if you haven’t played with it before, is Google’s playground version of Chrome. It’s the spot where they test all their wild and wonderful experimental features, and it looks like Gemini’s next up on stage.

Say Hello to GLIC: Gemini Live in Chrome

They’re calling this new integration “GLIC,” which stands for “Gemini Live in Chrome.” (Yes, tech companies can never resist a snappy acronym, can they?) According to the early glimpses from Canary, GLIC isn’t quite ready for primetime yet (no shock there), but the outlines are pretty clear.

Once activated, GLIC introduces a nifty Gemini icon neatly tucked up beside your usual minimise, maximise, and close window buttons. Click it, and a floating Gemini assistant modal pops open, ready and waiting for your prompts, questions, or random curiosities.

Prefer a less conspicuous spot? Google’s thought of that too—GLIC can also nestle comfortably in your system tray, offering quick access to Gemini without cluttering your browser interface.

Why Gemini in Chrome Actually Makes Sense

Having Gemini hanging out front and centre in Chrome feels like a smart move—especially when you’re knee-deep in tabs and need quick answers or creative inspiration on the fly. No more toggling between browser tabs or separate apps; your AI assistant is literally at your fingertips.

But let’s keep expectations realistic here—this is still Canary we’re talking about. Features here often need plenty of polish and tweaking before making it to the stable Chrome we all rely on. But the potential? Definitely exciting.

What’s Next?

For now, we’ll keep a close eye on GLIC’s developments. Will Gemini revolutionise how we interact with Chrome, or will it end up another quirky experiment? Either way, Google’s bet on AI is clearly ramping up, and we’re here for it. Don’t forget to sign up to our occasional newsletter to stay informed about this and other happenings around AI in Asia and beyond.

Stay tuned—we’ll share updates as soon as Google lifts the curtains a bit further.

You may also like:

- Revolutionising Search: Google’s New AI Features in Chrome

- Google Gemini: How To Maximise Its Potential

- Google Gemini: The Future of AI

- Try Google Chrome Canary by tapping here (be warned, it can be unstable!)



AI Career Guide: Land Your Next Job with Our AI Playbook

Will AI Take Your Job—or Supercharge Your Career?

Can AI Videos Really Boost Your Brand’s Authenticity?

Trending

-

Business3 weeks ago

Business3 weeks agoCan PwC’s new Agent OS Really Make AI Workflows 10x Faster?

-

Life2 weeks ago

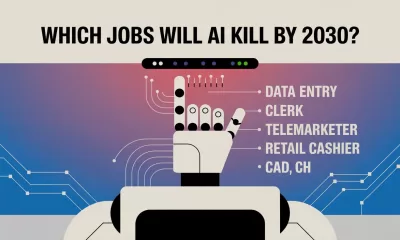

Life2 weeks agoWhich Jobs Will AI Kill by 2030? New WEF Report Reveals All

-

Life2 weeks ago

Life2 weeks agoAI Career Guide: Land Your Next Job with Our AI Playbook

-

Business2 weeks ago

Business2 weeks agoWill AI Take Your Job—or Supercharge Your Career?

-

Marketing2 weeks ago

Marketing2 weeks agoWill AI Kill Your Marketing Job by 2030?

-

Tools2 weeks ago

Tools2 weeks agoCan AI Videos Really Boost Your Brand’s Authenticity?

-

Business2 weeks ago

Business2 weeks agoThe Three AI Markets Shaping Asia’s Future

-

Business3 weeks ago

Business3 weeks agoEmbrace AI or Face Replacement—Grab CEO Anthony Tan’s Stark Warning