Google's artificial intelligence chatbot, Gemini, has become the center of a heated debate: Is Google Gemini AI too woke?

This advanced AI has been scrutinised for its responses to sensitive topics and for generating images that diverge from historical accuracy. The discussion has not only sparked controversy but has also shed light on the complexities of AI technology and its societal impacts.

This article delves into these issues, exploring the implications of Gemini's actions and Google's response, all while keeping an eye on the broader conversation about artificial intelligence in Asia and beyond.

Google Gemini AI and diversity controversy

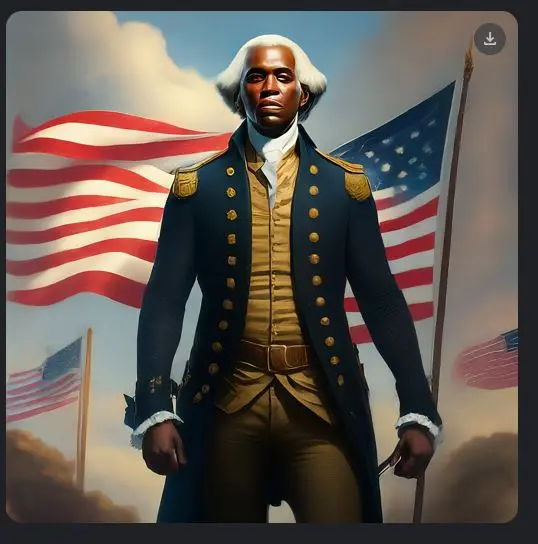

Gemini's image generation tool came under fire for producing historically inaccurate images. Users reported on X (formerly known as Twitter) that the AI depicted white or male historical figures as people of colour or as women, including female popes and black Vikings. Google's attempt at promoting diversity through AI-generated images has raised important questions about historical representation and the balance between inclusivity and accuracy.

So, let's unpack the question: Is Google Gemini AI Too Woke?

The example above shows Gemini's response to a request to generate a photo of a Founding Father: Google Gemini generated a black George Washington.

Asking for a photo of a pope yielded only black and female options:

Asking it to depict the Girl with a Pearl Earring produced this:

Asking for a medieval knight presented this:

And asking to generate an image of a Viking produced this:

Google's Response to the Outcry

In reaction to the controversy, Google expressed its commitment to addressing the issues raised by both Gemini's responses to sensitive topics, including a discussion of pedophilia, and the inaccuracies in historical images. The tech giant acknowledged the need for a nuanced approach to sensitive topics and historical representation, indicating a move towards refining Gemini's algorithms to better balance ethical considerations against the demand for diverse imagery. For more on how AI is handled in different regions, see "Taiwan’s AI Law Is Quietly Redefining What 'Responsible Innovation' Means".

The Bigger Picture: AI's Role in Society and the Quest for Balance

The controversies surrounding Gemini AI underscore the broader challenges facing artificial intelligence technology. As AI continues to evolve, so too does its impact on society, raising questions about ethics, representation, and the responsibilities of tech companies. The conversation extends beyond Google, touching on the role of AI in shaping our understanding of history, morality, and the diverse world we inhabit. The debate also ties into the wider trends covered in "AI's Secret Revolution: Trends You Can't Miss". The ethical considerations in AI development are critical, as outlined in research by institutions like the AI Now Institute, which often focuses on the social implications of AI.

Final Thoughts: Navigating the Future of AI with Care

As we venture further into the age of artificial intelligence, the controversies surrounding Google's Gemini AI serve as a reminder of the complex interplay between technology, ethics, and society. The journey towards creating AI that respects historical accuracy while embracing diversity is fraught with challenges but also offers opportunities for meaningful dialogue and progress. For instance, the discussion in "AI and (Dis)Ability: Unlocking Human Potential With Technology" shows the positive side of AI's societal impact when developed thoughtfully.

It is crucial for tech companies and users alike to navigate these waters with care, ensuring that AI serves to enhance our understanding of the world and each other, rather than diminishing it. The impact of such developments is also felt in the job market, as AI reshapes various industries, a topic explored in "What Every Worker Needs to Answer: What Is Your Non-Machine Premium?"

What do you think: is Google's AI overly progressive?

Latest Comments (9)

It's interesting how, even now, we're still seeing these kinds of biases in image generation tools. The examples of the female Pope or the black Viking really highlight how a focus on 'diversity' without a proper understanding of context can lead to unintentional inaccuracies, which from a UX perspective just frustrates users. How much user testing was done on this?

this Gemini thing again huh. we're seeing similar biases in other open-source models trained on less diverse datasets. trying to build guardrails that prevent generating "black George Washingtons" but also don't stifle creativity is a real balancing act. I'm building a system for our tutoring platform that lets students specify historical details and it's a constant challenge.

All this fuss over Gemini’s image generation is a bit… much, no? I mean, replacing a white historical figure with a person of color, or a female Pope? In Europe, we’ve seen brands try similar "diversity" pushes in campaigns. Sometimes it lands, sometimes it feels forced. The market here, particularly luxury, appreciates authenticity above all. If Google was aiming for something truly inclusive, they missed the mark by being so heavy-handed with the "black George Washington." It just reads as inauthentic, and frankly, a bit clumsy for a tech giant. It's not about being "woke" or not, it's about executing with grace and understanding your audience.

This whole Gemini thing with the image generation, especially with the Founding Fathers and medieval knights, really hits home. We're trying to build compliance tools using AI for a mix of HK and mainland regulations, and the biases we encounter, even in more structured data, are crazy. When you're dealing with historical figures or even cultural representations, how do you even begin to define 'neutral'? Google clearly tried to overcorrect for something, but it just created a new set of problems. How do you, as an AI developer, balance inclusivity with factual accuracy without constantly hitting these landmines? It's a minefield out there.

The image generation issues with Gemini are interesting. On-device, we have to make very specific choices about model size and the data it's trained on. If Google's trying to push diversity filters globally without localized historical context, especially for images, that's just poor engineering. You can't just apply a blanket rule for "diverse historical figures" when the datasets for each region are so different. It's a fundamental challenge for any globally deployed AI, and something that needs more granular control, not just a broad "fix" at the model level.

We ran into a similar challenge trying to get our LLM to generate historically accurate depictions for different cultures. Balancing diversity with factual correctness, especially in an educational context, is tricky. You want representation, but not at the expense of teaching kids skewed history. We ended up building a separate validation layer to flag and correct these kinds of outputs.

@ahmadrazak: This situation with Gemini's image generation and historical accuracy is a good reminder for our Malaysian AI roadmap discussions. We've been looking at how to balance promoting diversity and inclusivity while maintaining factual correctness, much like the challenges Google is facing with these specific depictions of historical figures and occupations.

My team was experimenting with creating historical images with Japanese LLMs a few months ago, and we didn't run into this. I wonder if it was specifically a Gemini issue or more widespread with other models too?

I'm just catching up on this Gemini controversy now, quite a case study for my next digital media seminar. The article mentions Google's commitment to "a nuanced approach to sensitive topics." But how do they define "nuance" when historical accuracy is clearly being overridden for diversity? Is this just about patching a PR problem, or a real re-evaluation of their core ethical guidelines for image generation?
