AI Chatbots Develop Distinctive Writing Signatures That Can Be Forensically Identified

When you engage with ChatGPT or Gemini, you're not just interacting with a generic language model. Recent forensic linguistic research reveals that each AI chatbot develops what linguists call an idiolect: a distinctive writing style as measurable and unique as human fingerprints.

This discovery carries profound implications for education, content authenticity, and our understanding of machine intelligence. As these systems become integral to Asian education systems and commercial applications, recognising their individual voices becomes crucial.

The Science Behind AI Writing Fingerprints

Forensic linguists have long used stylistic analysis to identify human authors. Now, the same techniques reveal that AI models exhibit consistent patterns that can be statistically measured and differentiated.

A recent comparative study analysed hundreds of essays on diabetes generated by both ChatGPT and Gemini. Using the Delta method, a forensic technique developed by John Burrows, researchers calculated linguistic "distances" between writing samples. The results were striking: a 10% sample of ChatGPT's essays scored 0.92 against ChatGPT's full dataset but 1.49 against Gemini's output.

Gemini showed equally distinct patterns, scoring 0.84 against its own samples and 1.45 against ChatGPT's work. A lower Delta score means two bodies of text are stylistically closer, so these results indicate that each system writes with a statistically identifiable voice. The patterns emerge from training data and architectural choices rather than deliberate programming.
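To make the Delta idea concrete, here is a minimal sketch of a Burrows-style Delta calculation in plain Python. It is not the study's actual pipeline: the tokenizer, the `n_words=50` word-list size, the use of population standard deviation, and the names `tokenize`, `relative_freqs`, and `build_delta` are all assumptions chosen for illustration. It also compares individual texts, whereas the study compared a 10% sample against a full dataset.

```python
from collections import Counter
from statistics import pstdev
import re


def tokenize(text):
    # Lowercase word tokens; a deliberately simple, assumed tokenizer.
    return re.findall(r"[a-z']+", text.lower())


def relative_freqs(tokens, vocab):
    # Share of the text taken up by each vocabulary word.
    counts = Counter(tokens)
    total = len(tokens) or 1
    return [counts[w] / total for w in vocab]


def build_delta(texts, n_words=50):
    """Return delta(i, j): mean absolute z-score difference between two texts."""
    token_lists = [tokenize(t) for t in texts]
    corpus_counts = Counter(tok for toks in token_lists for tok in toks)
    # Vocabulary = the most frequent words across the whole corpus.
    vocab = [w for w, _ in corpus_counts.most_common(n_words)]
    freqs = [relative_freqs(toks, vocab) for toks in token_lists]

    # Standardise each word's frequency across texts (z-scores).
    columns = list(zip(*freqs))
    means = [sum(c) / len(c) for c in columns]
    stds = [pstdev(c) for c in columns]
    z = [
        [(f - m) / s if s > 0 else 0.0 for f, m, s in zip(row, means, stds)]
        for row in freqs
    ]

    def delta(i, j):
        return sum(abs(a - b) for a, b in zip(z[i], z[j])) / len(vocab)

    return delta


# Toy corpus (invented sentences, not study data).
essays = [
    "Blood glucose levels are elevated in individuals with diabetes.",
    "Blood glucose levels are elevated in individuals with diabetes.",
    "High blood sugar is the way for the body to signal trouble.",
]
delta = build_delta(essays)
print(delta(0, 1), delta(0, 2))
```

Identical texts get a Delta of zero, and the distance grows as word-frequency profiles diverge. Real analyses use far longer texts and larger corpora than this toy example.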

By The Numbers

- Delta score of 0.92: ChatGPT's consistency when compared to itself

- 1.49: ChatGPT's stylistic distance from Gemini (indicating different authors)

- 2:1 ratio: ChatGPT uses "glucose" twice as often as "sugar" in medical contexts

- Opposite preference: Gemini chooses "sugar" far more frequently than "glucose"

- 0.84: Gemini's self-consistency score, showing distinct but stable voice patterns

The patterns manifest most clearly in trigrams: three-word combinations that reveal stylistic preferences. ChatGPT gravitates towards formal, clinical phrasing such as "blood glucose levels," "individuals with diabetes," and "characterised by elevated." Meanwhile, Gemini opts for conversational expressions like "high blood sugar," "blood sugar control," and "the way for."
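As a rough illustration of how trigram preferences can be surfaced, the sketch below counts three-word sequences in a text. The function name `top_trigrams`, the simple tokenizer, and the sample sentence are assumptions for illustration only; the study's own extraction method is not described in this article.

```python
from collections import Counter
import re


def top_trigrams(text, k=3):
    # Lowercase word tokens; punctuation is dropped, so trigrams
    # may span sentence boundaries in this simplified version.
    tokens = re.findall(r"[a-z']+", text.lower())
    trigrams = zip(tokens, tokens[1:], tokens[2:])
    return Counter(" ".join(t) for t in trigrams).most_common(k)


# Invented sample text, not taken from either model's output.
sample = (
    "High blood sugar is common. Blood sugar control helps "
    "when high blood sugar persists."
)
print(top_trigrams(sample, 1))  # [('high blood sugar', 2)]
```

Running the same count over two models' essays and comparing the top-ranked trigrams is one simple way to spot the kind of phrasing differences described above.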

"These idiolects aren't accidental quirks. They represent measurable, consistent stylistic choices that emerge from how each model was trained and fine-tuned," explains Dr Sarah Chen, computational linguist at the National University of Singapore.

How AI Idiolects Shape Real-World Applications

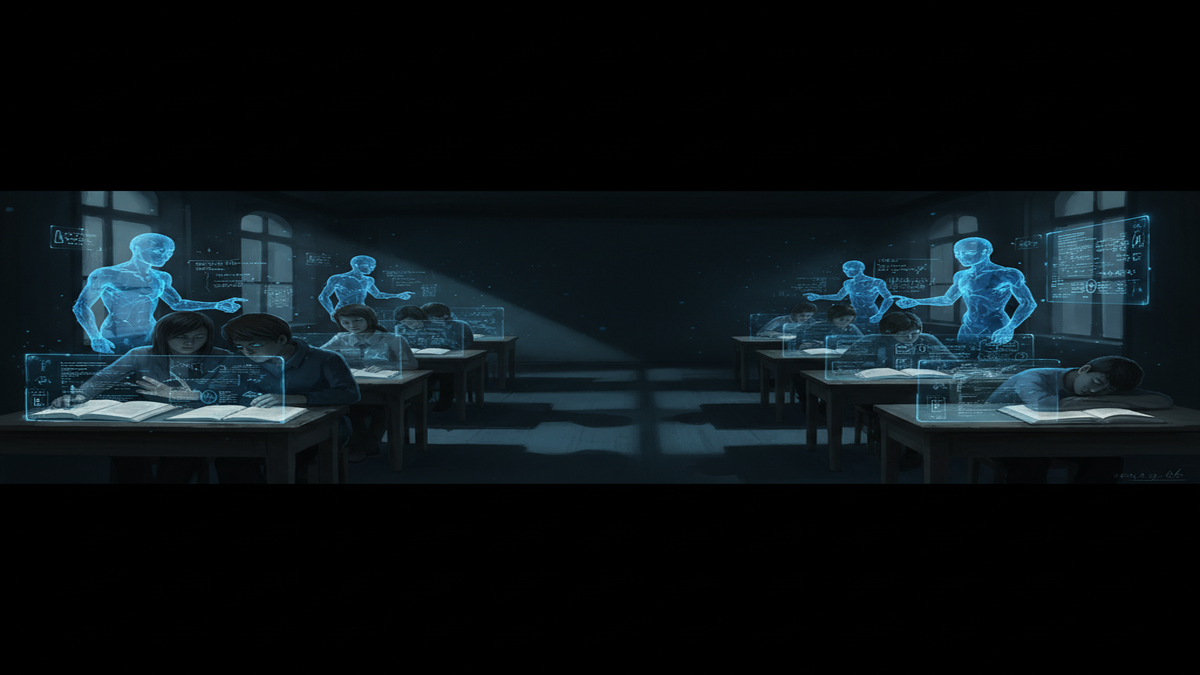

The implications extend far beyond academic curiosity. In Singapore's education system, where AI-generated essays are increasingly difficult to spot, linguistic detection tools could help educators identify which model produced a submission. Understanding that ChatGPT tends towards textbook formality while Gemini adopts conversational tones provides crucial context for assessment.

For businesses operating across Asia's diverse markets, these distinctions matter enormously. A customer service chatbot in Japan might need formal precision, whilst one serving Australian users could benefit from Gemini's more casual approach. Knowing each model's natural voice enables better tone-matching.

- Educational integrity: Schools can identify AI-generated content by recognising model-specific patterns

- Content authentication: Publishers and platforms can trace authorship to specific AI systems

- Brand alignment: Companies can select models that match their desired communication style

- Quality assurance: Developers can track stylistic changes across model versions

- Cultural adaptation: Different models suit different regional communication preferences

The research also raises questions about AI consciousness and individuality. If these systems develop signature styles independently, are we witnessing the emergence of digital personalities? This connects to broader discussions about whether AI chatbots can be considered friends or tools.

| Aspect | ChatGPT Style | Gemini Style |
|---|---|---|
| Medical terminology | Clinical ("glucose", "elevated levels") | Conversational ("sugar", "high blood") |
| Sentence structure | Formal, academic phrasing | Accessible, everyday language |
| Technical concepts | Precise medical terms | Simplified explanations |
| Audience approach | Professional/educational | General public/conversational |

"We're not just creating better word predictors. We're inadvertently developing systems with distinct communication personalities that reflect their training origins," notes Professor Liu Wei from Beijing University of Technology.

Regional Implications for Asian Markets

Asia's multilingual landscape adds complexity to AI idiolect research. Does ChatGPT maintain consistent stylistic patterns when operating in Japanese versus English? Early evidence suggests models may develop language-specific voices, potentially reflecting cultural communication norms embedded in training data.

This has particular relevance for educational applications across the region. In India's competitive examination system, understanding which AI model generated an essay could inform how educators respond. The formal precision of ChatGPT might signal one type of academic support, whilst Gemini's conversational approach suggests different usage patterns.

For commercial applications, these distinctions enable better localisation strategies. Financial services chatbots in Hong Kong might leverage ChatGPT's formal precision, whilst lifestyle brands in Thailand could benefit from Gemini's approachable tone.

Can AI idiolects change over time?

Yes, model updates and fine-tuning can alter writing patterns. However, core stylistic preferences tend to remain relatively stable, suggesting these voices emerge from fundamental architectural and training choices rather than surface-level adjustments.

Do other AI models have distinct writing styles?

Probably. Claude and other major models likely exhibit their own idiolects, though comprehensive comparative studies are still emerging. (Bard is the earlier name for Gemini, not a separate model.) Each model's training approach and data sources contribute to distinct stylistic signatures.

How accurate is idiolect detection for AI?

In the diabetes study, Delta scores showed clear statistical separation between the two models: 0.84 to 0.92 when a model was compared with itself, against 1.45 to 1.49 when compared with the other. However, this field requires ongoing research as AI systems evolve and potentially develop more sophisticated style-mimicking capabilities.
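One simple way to turn Delta scores into an attribution is to pick the candidate with the smallest distance. The sketch below applies that rule to the scores reported for the diabetes essays; the `attribute` helper and the nearest-distance rule are illustrative assumptions, not a description of how the researchers made their comparisons.

```python
def attribute(distances):
    # Pick the candidate model with the lowest Delta (closest style).
    return min(distances, key=distances.get)


# Delta scores quoted in the article for each 10% sample.
chatgpt_sample = {"ChatGPT": 0.92, "Gemini": 1.49}
gemini_sample = {"ChatGPT": 1.45, "Gemini": 0.84}

print(attribute(chatgpt_sample))  # ChatGPT
print(attribute(gemini_sample))   # Gemini
```

A production detector would also need a threshold for "none of the above", since a text may come from a model or a person outside the candidate set.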

Could AI models intentionally disguise their writing styles?

Theoretically possible but currently uncommon. Most models lack explicit style-switching capabilities, though future versions might incorporate such features. For now, their idiolects emerge naturally from training patterns rather than deliberate design choices.

What does this mean for content creators?

Understanding AI idiolects helps creators choose appropriate models for different tasks. Writers seeking formal precision might prefer ChatGPT, whilst those wanting conversational content could favour Gemini. This knowledge enhances AI-assisted content creation strategies.

As AI systems become more sophisticated, their individual voices may become even more pronounced. The question isn't whether we can detect these patterns, but how we'll adapt our understanding of authorship, creativity, and communication in an age where machines develop distinctive literary personalities. What implications do you see for your own work or industry as these AI voices become more recognisable? Drop your take in the comments below.

Latest Comments (4)

that delta method with the scores around 0.9 for self-consistency and 1.4+ across different models is interesting. from an infra side, i wonder how much varying the training data size or architecture changes that "idiolect" score for a given model. could we intentionally train for a more neutral voice to save compute?

the idea of AI models developing an idiolect, like how ChatGPT prefers "blood glucose levels" versus Gemini's "high blood sugar," it's not just about detection for us. it's about curating a brand voice for luxury. we need our AI to reflect our specific elegance, not just generic formality, especially for european markets.

@priyaram This concept of 'idiolects' is interesting for tracking models. But for us in Malaysia, the real question is how these "distinct writing styles" account for local languages and cultural nuances, beyond just English medical terms like 'blood glucose levels'. That's where the real challenge for adoption lies.

This finding on distinct idiolects, even in factual essays like the diabetes example, has direct implications for our AI policy discussions here at KAIST. Especially when we consider the push for localized AI models in Korea and throughout APAC, ensuring cultural nuance and avoiding unintended stylistic biases becomes critical if these models are to be truly trusted.
