Life

AI Revolution: How PortfolioPilot is Disrupting Wealth Management

PortfolioPilot, an AI-powered financial advisor, is revolutionising wealth management with personalised, automated advice, gaining $20 billion in assets in just two years.

Published

10 months ago

By

AIinAsia

TL;DR:

- PortfolioPilot, an AI-powered financial advisor, has gained $20 billion in assets in just two years.

- The service uses generative AI for personalised financial advice and has over 22,000 users.

- The startup aims to disrupt the traditional wealth management industry with automated, tailored insights.

In the dynamic world of finance, a new player has emerged, challenging the status quo of wealth management. PortfolioPilot, an AI-powered financial advisor, has swiftly gained $20 billion in assets, offering a glimpse into the disruptive potential of artificial intelligence in this sector. Let’s dive into the fascinating story of PortfolioPilot and explore how it’s changing the game.

The Rise of PortfolioPilot

PortfolioPilot, launched by Global Predictions, has attracted over 22,000 users since its inception two years ago. The San Francisco-based startup recently secured $2 million in funding from investors, including Morado Ventures and the NEA Angel Fund, to fuel its growth. But what sets PortfolioPilot apart in the competitive world of wealth management?

The Power of Generative AI

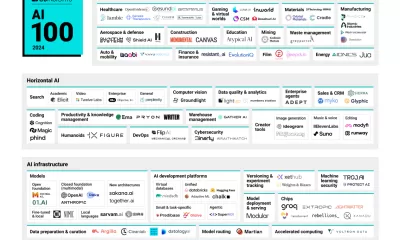

PortfolioPilot leverages generative AI models from OpenAI, Anthropic, and Meta’s Llama, combining them with machine learning algorithms and traditional finance models. This powerful mix enables the platform to provide personalised financial advice tailored to each user’s unique portfolio and risk tolerance.

Alexander Harmsen, the 32-year-old co-founder of Global Predictions, emphasises the importance of personalisation in wealth management:

“People are fed up with cookie-cutter portfolios. They really want opinionated insights; they want personalised recommendations. If we think about next-generation advice, I think it’s truly personalised, and you get to control how involved you are.”

How PortfolioPilot Works

PortfolioPilot focuses on three main factors when evaluating portfolios: investment risk levels, risk-adjusted returns, and resilience against sharp declines. Users can connect their investment accounts or manually input their stakes to receive a report card-style grade of their portfolio. The service is free, but a $29 per month “Gold” account offers personalised investment recommendations and an AI assistant.
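To make those three factors concrete, here is a minimal sketch using common textbook stand-ins: annualised volatility for risk level, the Sharpe ratio for risk-adjusted return, and maximum drawdown for resilience against sharp declines. The article does not say which formulas PortfolioPilot actually uses, so the function names, the 252-trading-day convention and the sample data below are all illustrative assumptions.

```python
import math


def annualised_volatility(returns, periods_per_year=252):
    """Sample standard deviation of periodic returns, scaled to one year."""
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance) * math.sqrt(periods_per_year)


def sharpe_ratio(returns, risk_free_rate=0.0, periods_per_year=252):
    """Annualised excess return divided by annualised volatility."""
    annual_return = (sum(returns) / len(returns)) * periods_per_year
    vol = annualised_volatility(returns, periods_per_year)
    if vol == 0:
        raise ValueError("volatility is zero; Sharpe ratio is undefined")
    return (annual_return - risk_free_rate) / vol


def max_drawdown(returns):
    """Largest peak-to-trough fall in cumulative value, as a negative fraction."""
    value = peak = 1.0
    worst = 0.0
    for r in returns:
        value *= 1 + r
        peak = max(peak, value)
        worst = min(worst, value / peak - 1)
    return worst


# Made-up daily returns, purely for illustration.
daily_returns = [0.01, -0.02, 0.015, -0.03, 0.02]
print("volatility:", annualised_volatility(daily_returns))
print("sharpe:", sharpe_ratio(daily_returns))
print("max drawdown:", max_drawdown(daily_returns))
```

A report-card grade would then be some mapping from numbers like these to a letter, and that mapping is a product decision the source does not describe.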

Harmsen explains the practical advice provided by PortfolioPilot:

“We will give you very specific financial advice, we will tell you to buy this stock, or ‘Here’s a mutual fund that you’re paying too much in fees for, replace it with this.’ It could be simple stuff like that, or it could be much more complicated advice, like, ‘You’re overexposed to changing inflation conditions, maybe you should consider adding some commodities exposure.’”

Targeting the Affluent

PortfolioPilot targets individuals with between $100,000 and $5 million in assets – those who have enough wealth to consider diversification and portfolio management. The median user has a net worth of $450,000. Currently, the startup doesn’t take custody of user funds but provides detailed directions on tailoring portfolios. However, Harmsen hints at a future where PortfolioPilot may offer more automation and deeper integrations, potentially even a second-generation robo-advisor system.

The Future of Wealth Management

Harmsen predicts a significant shake-up in the traditional wealth management industry as AI continues to advance. He believes that many current providers will struggle to adapt to the transition towards fully automated advice. The key, according to Harmsen, is using AI and economic models to generate advice automatically – a monumental leap for the traditional industry.

Regulatory Scrutiny

PortfolioPilot’s rapid rise has not gone unnoticed by regulators. In March, the Securities and Exchange Commission accused Global Predictions of making misleading claims on its website, resulting in a $175,000 fine and a change in the company’s tagline. Despite this setback, PortfolioPilot continues to push the boundaries of AI-driven wealth management.

The Birth of PortfolioPilot

The idea for PortfolioPilot was born out of Harmsen’s personal frustration with traditional financial advisors. After selling his first company, he found himself dissatisfied with the standard approaches offered by advisors. He wanted hedge fund-quality tools and risk management strategies, leading him to develop PortfolioPilot initially for his own use. Realising its broader potential, Harmsen assembled a team, including former employees of Bridgewater Associates, to launch Global Predictions.

The Impact of AI on Wealth Management

The advent of AI in wealth management is poised to disrupt the industry significantly. Traditional human advisors may face obsolescence as generative AI models become increasingly sophisticated. However, the industry has shown resilience, with giants like Morgan Stanley and Bank of America continuing to grow despite the rise of robo-advisors. The future will likely see a blend of human expertise and AI-driven insights, offering clients the best of both worlds.

Comment and Share

What do you think about the future of AI in wealth management? Will human advisors become obsolete, or will they coexist with AI-driven platforms like PortfolioPilot? Share your thoughts and experiences in the comments below, and don’t forget to subscribe for updates on AI and AGI developments. We’d love to hear from you!

You may also like:

- To learn more about AI in wealth management, tap here.

Discover more from AIinASIA

Subscribe to get the latest posts sent to your email.

You may like

Life

Adrian’s Arena: Will AI Get You Fired? 9 Mistakes That Could Cost You Everything

Will AI get you fired? Discover 9 career-killing AI mistakes professionals make—and how to avoid them.

Published

3 weeks ago on

May 15, 2025

TL;DR — What You Need to Know:

- Common AI mistakes that cost jobs can happen — fast

- Most are fixable if you know what to watch for.

- Avoid these pitfalls and make AI your career superpower.

Don’t blame the robot.

If you’re careless with AI, it’s not just your project that tanks — your career could be next.

Across Asia and beyond, professionals are rushing to implement artificial intelligence into workflows — automating reports, streamlining support, crunching data. And yes, done right, it’s powerful. But here’s what no one wants to admit: most people are doing it wrong.

I’m not talking about missing a few prompts or failing to generate that killer deck in time. I’m talking about the career-limiting, confidence-killing, team-splintering mistakes that quietly build up and explode just when it matters most. If you’re not paying attention, AI won’t just replace your role — it’ll ruin your reputation on the way out.

Here are 9 of the most common, most damaging AI blunders happening in businesses today — and how you can avoid making them.

1. You can’t fix bad data with good algorithms.

Let’s start with the basics. If your AI tool is churning out junk insights, odds are your data was junk to begin with. Dirty data isn’t just inefficient — it’s dangerous. It leads to flawed decisions, mis-targeted customers, and misinformed strategies. And when the campaign tanks or the budget overshoots, guess who gets blamed?

The solution? Treat your data with the same respect you’d give your P&L. Clean it, vet it, monitor it like a hawk. AI isn’t magic. It’s maths — and maths hates mess.

2. Don’t just plug in AI and hope for the best.

Too many teams dive into AI without asking a simple question: what problem are we trying to solve? Without clear goals, AI becomes a time-sink — a parade of dashboards and models that look clever but achieve nothing.

Worse, when senior stakeholders ask for results and all you have is a pretty interface with no impact, that’s when credibility takes a hit.

AI should never be a side project. Define its purpose. Anchor it to business outcomes. Or don’t bother.

3. Ethics aren’t optional — they’re existential.

You don’t need to be a philosopher to understand this one. If your AI causes harm — whether that’s through bias, privacy breaches, or tone-deaf outputs — the consequences won’t just be technical. They’ll be personal.

Companies can weather a glitch. What they can’t recover from is public outrage, legal fines, or internal backlash. And you, as the person who “owned” the AI, might be the one left holding the bag.

Bake in ethical reviews. Vet your training data. Put in safeguards. It’s not overkill — it’s job insurance.

4. Implementation without commitment is just theatre.

I’ve seen it more than once: companies announce a bold AI strategy, roll out a tool, and then… nothing. No training. No process change. No follow-through. That’s not innovation. That’s box-ticking.

If you half-arse AI, it won’t just fail — it’ll visibly fail. Your colleagues will notice. Your boss will ask questions. And next time, they might not trust your judgement.

AI needs resourcing, support, and leadership. Otherwise, skip it.

5. You can’t manage what you can’t explain.

Ever been in a meeting where someone says, “Well, that’s just what the model told us”? That’s a red flag — and a fast track to blame when things go wrong.

So-called “black box” models are risky, especially in regulated industries or customer-facing roles. If you can’t explain how your AI reached a decision, don’t expect others to trust it — or you.

Use interpretable models where possible. And if you must go complex, document it like your job depends on it (because it might).

6. Face the bias before it becomes your headline.

Facial recognition failing on darker skin tones. Recruitment tools favouring men. Chatbots going rogue with offensive content. These aren’t just anecdotes — they’re avoidable, career-ending screw-ups rooted in biased data.

It’s not enough to build something clever. You have to build it responsibly. Test for bias. Diversify your datasets. Monitor performance. Don’t let your project become the next PR disaster.
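One simple way to start testing for bias is to compare how often each group gets the favourable outcome. The sketch below is a minimal illustration, not a full fairness audit: the group labels, the sample data and the function names are made up, and a ratio well below 1.0 is only a prompt to investigate further. A common rule of thumb treats a ratio under 0.8 as a warning sign.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of favourable outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the model produced the favourable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def disparity_ratio(rates):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group
    return min(rates.values()) / highest


# Made-up screening outcomes for two hypothetical groups.
sample = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)
rates = selection_rates(sample)
print(rates)
print(disparity_ratio(rates))
```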

7. Training isn’t optional — it’s survival.

If your team doesn’t understand the tool you’ve introduced, you’re not innovating — you’re endangering operations. AI can amplify productivity or chaos, depending entirely on who’s driving.

Upskilling is non-negotiable. Whether it’s hiring external expertise or running internal workshops, make sure your people know how to work with the machine — not around it.

8. Long-term vision beats short-term wow.

Sure, the first week of AI adoption might look good. Automate a few slides, speed up a report — you’re a hero.

But what happens three months down the line, when the tool breaks, the data shifts, or the model needs recalibration?

AI isn’t set-and-forget. Plan for evolution. Plan for maintenance. Otherwise, short-term wins can turn into long-term liabilities.

9. When everything’s urgent, documentation feels optional.

Until someone asks, “Who changed the model?” or “Why did this customer get flagged?” and you have no answers.

In AI, documentation isn’t admin — it’s accountability.

Keep logs, version notes, data flow charts. Because sooner or later, someone will ask, and “I’m not sure” won’t cut it.
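Even a very small change log answers the “who changed the model?” question. The following is a minimal sketch, assuming an append-only JSON Lines file; the field names and both helper functions are hypothetical and not taken from any particular tool.

```python
import json
import os
import tempfile
from datetime import datetime, timezone


def log_model_change(path, model_version, changed_by, reason):
    """Append one JSON line describing a model change and return the entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "changed_by": changed_by,
        "reason": reason,
    }
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def who_changed(path, model_version):
    """Return every logged entry for the given model version."""
    with open(path, encoding="utf-8") as f:
        entries = [json.loads(line) for line in f if line.strip()]
    return [e for e in entries if e["model_version"] == model_version]


# Demo against a throwaway file so nothing is left behind on disk.
with tempfile.TemporaryDirectory() as tmp:
    log_path = os.path.join(tmp, "model_changes.jsonl")
    log_model_change(log_path, "v2", "alice", "retrained on Q2 data")
    log_model_change(log_path, "v3", "bob", "raised flagging threshold")
    print(who_changed(log_path, "v3"))
```

An append-only file keeps the history intact: entries are added and never edited, which is what makes the log useful when someone asks months later.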

Final Thoughts: AI doesn’t cost jobs. People misusing AI do.

Most AI mistakes aren’t made by the machines — they’re made by humans cutting corners, skipping checks, and hoping for the best. And the consequences? Lost credibility. Lost budgets. Lost roles.

But it doesn’t have to be that way.

Used wisely, AI becomes your competitive edge. A signal to leadership that you’re forward-thinking, capable, and ready for the future. Just don’t stumble on the same mistakes that are currently tripping up everyone else.

So the real question is: are you using AI… or is it quietly using you?

You may also like:

- Bridging the AI Skills Gap: Why Employers Must Step Up

- From Ethics to Arms: Google Lifts Its AI Ban on Weapons and Surveillance

- Or try the free version of Google Gemini by tapping here.

Author

Adrian is an AI, marketing, and technology strategist based in Asia, with over 25 years of experience in the region. Originally from the UK, he has worked with some of the world’s largest tech companies and successfully built and sold several tech businesses. Currently, Adrian leads commercial strategy and negotiations at one of ASEAN’s largest AI companies. Driven by a passion to empower startups and small businesses, he dedicates his spare time to helping them boost performance and efficiency by embracing AI tools. His expertise spans growth and strategy, sales and marketing, go-to-market strategy, AI integration, startup mentoring, and investments.

Life

FAKE FACES, REAL CONSEQUENCES: Should NZ Ban AI in Political Ads?

New Zealand has no laws preventing the use of deepfakes or AI-generated content in political campaigns. As the 2025 elections approach, is it time for urgent reform?

Published

3 weeks ago on

May 14, 2025

By

AIinAsia

TL;DR — What You Need to Know

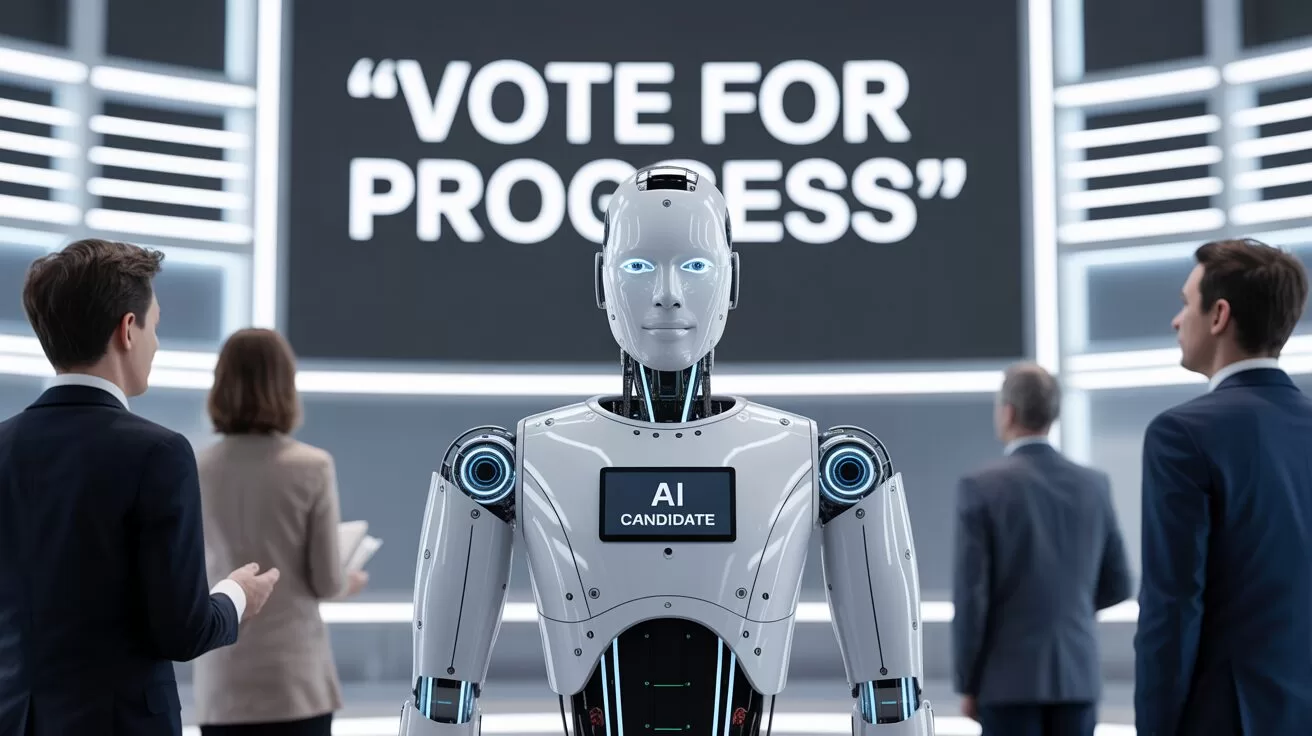

- New Zealand political campaigns are already dabbling with AI-generated content, but without clear rules or disclosures.

- Deepfakes and synthetic images of ethnic minorities risk fuelling cultural offence and voter distrust.

- Other countries are moving fast with legislation. Why is New Zealand dragging its feet?

AI in New Zealand Political Campaigns

Seeing isn’t believing anymore — especially not on the campaign trail.

In the build-up to the 2025 local body elections, New Zealand voters are being quietly nudged into a new kind of uncertainty: Is what they’re seeing online actually real? Or has it been whipped up by an algorithm?

This isn’t science fiction. From fake voices of Joe Biden in the US to Peter Dutton deepfakes dancing across TikTok in Australia, we’ve already crossed the threshold into AI-assisted campaigning. And New Zealand? It’s not far behind — it just lacks the rules.

The National Party admitted to using AI in attack ads during the 2023 elections. The ACT Party’s Instagram feed includes AI-generated images of Māori and Pasifika characters — but nowhere in the posts do they say the images aren’t real. One post about interest rates even used a synthetic image of a Māori couple from Adobe’s stock library, without disclosure.

That’s two problems in one. First, it’s about trust. If voters don’t know what’s real and what’s fake, how can they meaningfully engage? Second, it’s about representation. Using synthetic people to mimic minority communities without transparency or care is a recipe for offence — and harm.

Copy-Paste Cultural Clangers

Australians already find some AI-generated political content “cringe” — and voters in multicultural societies are noticing. When AI creates people who look Māori, Polynesian or Southeast Asian, it often gets the cultural signals all wrong. Faces are oddly symmetrical, clothing choices are generic, and context is stripped away. What’s left is a hollow image that ticks the diversity box without understanding the lived experience behind it.

And when political parties start using those images without disclosure? That’s not smart targeting. That’s political performance, dressed up as digital diversity.

A Film-Industry Fix?

If you’re looking for a local starting point for ethical standards, look to New Zealand’s film sector. The NZ Film Commission’s 2025 AI Guidelines are already ahead of the game — promoting human-first values, cultural respect, and transparent use of AI in screen content.

The public service also has an AI framework that calls for clear disclosure. So why can’t politics follow suit?

Other countries are already acting. South Korea bans deepfakes in political ads 90 days before elections. Singapore outlaws digitally altered content that misrepresents political candidates. Even Canada is exploring policy options. New Zealand, in contrast, offers voluntary guidelines — which are about as enforceable as a handshake on a Zoom call.

Where To Next?

New Zealand doesn’t need to reinvent the wheel. But it does need urgent rules — even just a basic requirement for political parties to declare when they’re using AI in campaign content. It’s not about banning creativity. It’s about respecting voters and communities.

In a multicultural democracy, fake faces in real campaigns come with consequences. Trust, representation, and dignity are all on the line.

What do YOU think?

Should political parties be forced to declare AI use in their ads — or are we happy to let the bots keep campaigning for us?

You may also like:

- AI Chatbots Struggle with Real-Time Political News: Are They Ready to Monitor Elections?

- Supercharge Your Social Media: 5 ChatGPT Prompts to Skyrocket Your Following

- AI Solves the ‘Cocktail Party Problem’: A Breakthrough in Audio Forensics

Life

7 Mind-Blowing New ChatGPT Use Cases in 2025

Discover 7 powerful new ChatGPT use cases for 2025 — from sales training to strategic planning. Built for real businesses, not just techies.

Published

3 weeks ago on

May 14, 2025

By

AIinAsia

TL;DR — What You Need to Know:

- ChatGPT use cases in 2025 are changing the way we work, and fast.

- Its new capabilities are shockingly useful, from real-time strategy building to smarter email, training, and customer service.

- The tech’s no longer the limiting factor. How you use it is what sets winners apart.

- You don’t need a dev team — just smart prompts, good judgement, and a bit of experimentation.

Welcome to Your New ChatGPT Use Cases in 2025

Something extraordinary is happening with AI — and this time, it’s not just another update. ChatGPT’s latest model has quietly become one of the most powerful tools on the planet, capable of outperforming human professionals in everything from sales role-play to strategic planning.

Here’s what’s changed: 2025’s AI isn’t just faster or more fluent. It’s fundamentally more useful. And while most people are still asking it to write birthday poems or summarise PDFs, smart businesses are doing something entirely different.

They’re solving real problems.

So here are 7 powerful, practical, and slightly mind-blowing ways you can use ChatGPT right now — whether you’re running a startup, scaling a business, or just trying to survive your inbox.

1. The Intelligence Quantum Leap

Let’s start with the big one. GPT-4o — OpenAI’s flagship model for 2025 — doesn’t just understand language. It reasons. It plans. It scores higher than the average human on standardised IQ tests.

And yes, that’s both impressive and terrifying.

But the real win for business? You now have on-demand access to a logic machine that can unpack strategy, simulate market moves, and give brutally clear feedback on your plans — without needing a whiteboard or a 5-hour workshop.

Ask ChatGPT:

“Compare three go-to-market strategies for a mid-priced SaaS product in Southeast Asia targeting logistics firms.”

It’ll give you a side-by-side breakdown faster than most consultants.

Why it matters:

The days of ‘I’ll get back to you after I crunch the data’ are over. You now crunch in real time. Strategy meetings just got smarter — and shorter.

2. Email Management: The Silent Revolution

Email is where good ideas go to die. But what if AI could handle the grunt work — without sounding like a robot?

In 2025, it can. ChatGPT now plugs seamlessly into tools like Zapier, Make.com, and even Outlook or Gmail via APIs. That means you can automate 80% of your email workflow:

- Draft responses in your tone of voice

- Auto-tag or file messages based on content

- Trigger follow-ups without lifting a finger

Real use case:

A boutique agency in Singapore uses ChatGPT to scan all inbound client emails, draft smart replies with custom links, and log actions in Notion. Result? 40% time saved, zero missed follow-ups.

But beware:

Letting AI send emails unsupervised is asking for trouble. Use a “draft-and-review” loop — AI writes it, you approve it.
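The “draft-and-review” loop can be sketched as a queue in which nothing is sent until a human has approved it. This is a minimal illustration only: `draft_reply` is a placeholder for whatever model call you use, and `ReviewQueue` and its methods are hypothetical names, not part of ChatGPT, Zapier, or any mail API.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    to: str
    body: str
    approved: bool = False


def draft_reply(sender, message):
    """Placeholder for a model call; returns a canned draft for illustration."""
    return Draft(to=sender, body=f"Hi, thanks for your note about: {message[:40]}")


class ReviewQueue:
    """Holds AI-written drafts until a person approves them."""

    def __init__(self):
        self.pending = []
        self.sent = []

    def add(self, draft):
        self.pending.append(draft)

    def approve(self, index, edited_body=None):
        """Mark a pending draft as reviewed, optionally replacing its text."""
        draft = self.pending.pop(index)
        if edited_body is not None:
            draft.body = edited_body
        draft.approved = True
        return draft

    def send(self, draft):
        """Refuse to send anything that has not been reviewed."""
        if not draft.approved:
            raise PermissionError("draft has not been reviewed")
        self.sent.append(draft)  # a real system would call a mail API here


queue = ReviewQueue()
queue.add(draft_reply("client@example.com", "Can we move Thursday's call?"))
reviewed = queue.approve(0, edited_body="Hi, Thursday at 3pm works for us.")
queue.send(reviewed)
print(len(queue.sent), "email sent after review")
```

The design choice that matters is the check inside `send`: the approval step is enforced in code rather than left to habit.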

3. Voice-Powered Strategy: AI That Walks With You

Here’s a glimpse of the future: You’re walking to get kopi. You press and hold your ChatGPT app. You say:

“I’m thinking about launching a mini-course for HR leaders on AI literacy. Maybe bundle it with a coaching session. Can you sketch out a funnel?”

By the time you get back to your desk, it’s done. A structured funnel. Headline ideas. Audience personas. Even suggested pricing tiers.

This is now live.

The new voice interaction mode in ChatGPT feels like talking to a strategist who never gets tired. It remembers what you said, clarifies details, and adapts based on your feedback. Use it during your commute. In the gym. While cooking.

Think about it:

Your best thinking doesn’t always happen at your desk. Now, it doesn’t have to.

4. Sales Role-Play (That Doesn’t Suck)

Sales teams have always known the value of practice. But let’s be honest: traditional role-play is awkward, slow, and often skipped.

Now imagine this: You open ChatGPT and say:

“Pretend you’re a CFO pushing back on my pitch for enterprise expense software. Hit me with your top three objections.”

It does. Relentlessly. Then you tweak it:

“Now play a more sceptical CFO. Use financial jargon. Be unimpressed.”

It does that too.

Why it works:

There’s no fear of judgement. No awkwardness. Just high-impact reps that sharpen your message and steel your nerves.

Results?

One founder I know used this daily before calls — and closed 4 out of 5 deals that quarter. That’s not hype. That’s practice made perfect.

5. Marketing Psychology at Scale

Your customers are constantly telling you what they care about. But the signal’s buried in reviews, chats, complaints, comments, and survey feedback.

ChatGPT is now ridiculously good at sifting through this mess and surfacing insights — emotional tone, patterns in word choice, common objections, even specific desires.

Example prompt:

“Analyse these 250 customer reviews. What do customers love most? What words do they use to describe our product? What are their biggest frustrations?”

What you get is a heatmap of customer psychology.

Smart marketers use this to:

- Reframe messaging

- Write landing pages in the customer’s voice

- Identify overlooked objections early

Bonus trick:

Feed this analysis into your ad copywriting prompts. CTRs go up. Every. Single. Time.

6. 24/7 Customer Engagement — That Doesn’t Feel Robotic

We’ve all used chatbots that sound like your uncle trying to be cool. Not anymore.

With GPT-4o and custom instructions, you can now build a digital agent that actually sounds like your brand, asks smart follow-ups, and guides users toward decisions.

Imagine this:

You run an e-commerce site. A customer asks about shipping options. Instead of a static FAQ or slow email reply, ChatGPT:

- Asks where they’re based

- Calculates delivery timelines

- Recommends a bundled offer

- Logs the lead to your CRM

All in real time.

Result?

One online skincare brand reported a 50% increase in cart completions just by switching to an AI-led chat system.

The real kicker? Customers prefer talking to it.

7. Your Digital Ops Manual — Finally Done

Every business struggles with documenting processes. SOPs are boring, messy, and constantly out of date.

But ChatGPT? It lives for this.

Feed it rough notes, voice memos, old docs — and it turns them into clear, structured workflows.

Now take it one step further:

Set up a private knowledge base where your team can ask questions naturally and get precise answers.

“What’s our refund process for EU customers?”

“How do I update a client billing profile?”

“What’s the Slack etiquette for our sales team?”

ChatGPT answers. With citations.

Training time drops. Mistakes go down. New hires ramp up faster.

Best of all?

It gets smarter the more your team uses it.

So… What’s Stopping You Trying These ChatGPT Use Cases in 2025?

Every use case in this article is live. Affordable. And 100% usable today. No code. No dev team. No six-month roadmap.

Just smarter thinking — and a willingness to try.

So here’s the real question:

What’s your excuse for not using AI like this yet… and how long can you afford to wait?

You may also like:

- AI in Email Marketing: A New Dawn

- Optimise Your Sales Strategy with ChatGPT: Top AI Prompts for Sellers

- Transforming Sales Coaching in Asia With AI

- Or try these out now on the free version of ChatGPT by tapping here.
