The Future of Science: Can AI Automate Research?

AI Scientist is a groundbreaking tool that automates the scientific research process, from reading literature to writing and reviewing papers.

Published 9 months ago by AIinAsia

TL;DR:

- AI Scientist, developed by Sakana AI and academic labs, can perform the full cycle of research from reading literature to writing and reviewing papers.

- The system is limited to the field of machine learning and lacks the ability to conduct laboratory work.

- Experts praise the openness of the project but note that AI Scientist’s outputs are incremental and it has a popularity bias in referencing papers.

The Dawn of AI in Scientific Research

Imagine a world where science is fully automated. A team of machine-learning researchers has taken a significant step towards this future with the creation of AI Scientist. Developed by Sakana AI in Tokyo, along with academic labs in Canada and the United Kingdom, AI Scientist can perform the entire cycle of research—from reading existing literature to formulating hypotheses, conducting experiments, and even writing and reviewing its own papers.

What is AI Scientist?

AI Scientist is built on a large language model (LLM) and is designed to automate the scientific process. It starts by reading the literature on a problem and formulating hypotheses for new developments. It then conducts its own ‘experiments’ by running algorithms and measuring their performance. Finally, it produces a paper and evaluates it through an automated peer review process.

Cong Lu, a machine-learning researcher at the University of British Columbia and co-creator of AI Scientist, explains, “To my knowledge, no one has yet done the total scientific community, all in one system.” The results were recently posted on the arXiv preprint server.

The Potential of AI in Science

Jevin West, a computational social scientist at the University of Washington, praises the project. “It’s impressive that they’ve done this end-to-end,” he says. “And I think we should be playing around with these ideas, because there could be potential for helping science.”

However, the output of AI Scientist is not yet groundbreaking. The system can only do research in the field of machine learning and lacks the ability to conduct laboratory work.

Gerbrand Ceder, a materials scientist at Lawrence Berkeley National Laboratory, notes, “There’s still a lot of work to go from AI that makes a hypothesis to implementing that in a robot scientist.”

How AI Scientist Works

AI Scientist uses a technique called evolutionary computation, inspired by Darwinian evolution. It applies small, random changes to an algorithm and selects the ones that improve efficiency. The system then conducts its own ‘experiments’ by running the algorithms and measuring their performance. After producing a paper, it evaluates it through an automated peer review process. This cycle can then repeat, building on its own results.
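The mutate-and-select idea can be sketched in a few lines of Python. This is a toy illustration only, not the AI Scientist code: `evolve` and `toy_fitness` are hypothetical names, and the fitness function is a made-up stand-in for "run the algorithm and measure its performance".

```python
import random

def evolve(fitness, start, steps=200, step_size=0.1, seed=0):
    """Simple evolutionary loop: mutate, evaluate, keep improvements."""
    rng = random.Random(seed)
    best = list(start)
    best_score = fitness(best)
    for _ in range(steps):
        # Apply a small random change to one parameter of the current best.
        candidate = list(best)
        i = rng.randrange(len(candidate))
        candidate[i] += rng.gauss(0.0, step_size)
        score = fitness(candidate)
        # Selection: keep the mutated candidate only if it scores higher.
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score

def toy_fitness(params):
    # Hypothetical performance measure (an assumption for this sketch):
    # higher is better, with the peak at params == [1.0, -2.0].
    return -((params[0] - 1.0) ** 2 + (params[1] + 2.0) ** 2)

best, score = evolve(toy_fitness, start=[0.0, 0.0])
print(best, score)
```

Because a candidate is only kept when it beats the current best, the score can never get worse from one step to the next. Real systems typically evolve whole populations and far richer objects than two numbers.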

Criticisms and Limitations

Some researchers have been critical of AI Scientist’s outputs.

One commenter on Hacker News stated, “As an editor of a journal, I would likely desk-reject them. As a reviewer, I would reject them.”

West also points out that AI Scientist has a reductive view of how researchers learn about their field.

“Science is more than a pile of papers,” he says. “You can have a 5-minute conversation that will be better than a 5-hour study of the literature.”

The Future of Automated Science

Despite its limitations, AI Scientist represents a significant step forward in the automation of scientific research. Tom Hope, a computer scientist at the Allen Institute for AI, notes that current LLMs are not able to formulate novel and useful scientific directions beyond basic combinations of buzzwords. However, he believes that AI could still automate many repetitive aspects of research.

Ceder agrees, stating, “At the low level, you’re trying to analyse what something is, how something responds. That’s not the creative part of science, but it’s 90% of what we do.”

Broadening AI Scientist’s Capabilities

Lu believes that to broaden AI Scientist’s capabilities, it might need to include other techniques beyond language models. Recent results from Google DeepMind have shown the power of combining LLMs with symbolic AI techniques, which build logical rules into a system rather than relying solely on statistical patterns in data.

“We really believe this is the GPT-1 of AI science,” Lu says, referring to an early large language model by OpenAI.

The Debate on AI in Science

The development of AI Scientist feeds into a broader debate about the role of AI in scientific research.

West notes, “All my colleagues in different sciences are trying to figure out, where does AI fit in in what we do? It does force us to think what is science in the twenty-first century — what it could be, what it is, what it is not.”

Comment and Share:

What do you think about the future of AI in scientific research? Could AI Scientist revolutionise the way we conduct research? Share your thoughts and experiences with AI and AGI technologies in the comments below. Don’t forget to subscribe for updates on AI and AGI developments.

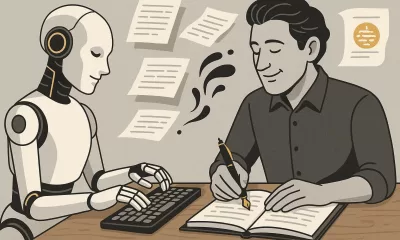

How To Teach ChatGPT Your Writing Style

This warm, practical guide explores how professionals can shape ChatGPT’s tone to match their own writing style. From defining your voice to smart prompting and memory settings, it offers a step-by-step approach to turning ChatGPT into a savvy writing partner.

Published 20 hours ago on June 4, 2025, by AIinAsia

TL;DR — What You Need To Know

- ChatGPT can mimic your writing tone with the right examples and prompts

- Start by defining your personal style, then share it clearly with the AI

- Use smart prompting, not vague requests, to shape tone and rhythm

- Custom instructions and memory settings help ChatGPT “remember” you

- It won’t be perfect — but it can become a valuable creative sidekick.

Start by defining your voice

Before ChatGPT can write like you, you need to know how you write. This may sound obvious, but most professionals haven’t clearly articulated their voice. They just write.

Think about your usual tone. Are you friendly, brisk, poetic, slightly sarcastic? Do you use short, direct sentences or long ones filled with metaphors? Swear words? Emojis? Do you write like you talk?

Collect a few of your own writing samples: a newsletter intro, a social media post, even a Slack message. Read them aloud. What patterns emerge? Look at rhythm, vocabulary and mood. That’s your signature.

Show ChatGPT your writing

Now you’ve defined your style, show ChatGPT what it looks like. You don’t need to upload a manifesto. Just say something like:

“Here are three examples of my writing. Please analyse my tone, sentence structure and word choice. I’d like you to write like this moving forward.”

Then paste your samples. Follow up with:

“Can you describe my writing style in a few bullet points?”

You’re not just being polite. This step ensures you’re aligned. It also helps ChatGPT to frame your voice accurately before trying to imitate it.

Be sure to offer varied, representative examples. The more you reflect your daily writing habits across different formats (emails, captions, articles), the sharper the mimicry.

Prompt with purpose

Once ChatGPT knows how you write, the next step is prompting. And this is where most people stumble. Saying, “Make it sound like me” isn’t quite enough.

Instead, try:

“Rewrite this in my tone: warm, conversational, and a little cheeky.”

“Avoid sounding corporate. Use contractions, variety in sentence length and clear rhythm.”

Yes, you may need a few back-and-forths. But treat it like any editorial collaboration — the more you guide it, the better the results.

And once a prompt nails your style? Save it. That one sentence could be reused dozens of times across projects.
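If you keep your prompts in a script or notes tool, one way to "save it" is a small helper that rebuilds the same prompt every time. The sketch below is only an illustration: `build_style_prompt` is a hypothetical function, it just assembles text, and it does not call ChatGPT or any API.

```python
def build_style_prompt(samples, traits, task):
    """Assemble a reusable style prompt from writing samples and tone traits."""
    if not samples:
        raise ValueError("provide at least one writing sample")
    # Number each sample so the model can tell them apart.
    numbered = "\n\n".join(
        f"Example {i}:\n{text.strip()}" for i, text in enumerate(samples, start=1)
    )
    tone = ", ".join(traits)
    return (
        "Here are examples of my writing.\n\n"
        f"{numbered}\n\n"
        f"Rewrite the following in my tone ({tone}). "
        "Avoid sounding corporate. Use contractions and varied sentence length.\n\n"
        f"Text to rewrite:\n{task.strip()}"
    )

prompt = build_style_prompt(
    samples=["Quick one: the report's late, but it's worth the wait."],
    traits=["warm", "conversational", "a little cheeky"],
    task="We regret to inform you that the meeting has been rescheduled.",
)
print(prompt)
```

Paste the printed text into a chat, and swap in new `task` text whenever you need another rewrite in the same voice.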

Use memory and custom instructions

ChatGPT now lets you store tone and preferences in memory. It’s like briefing a new hire once, rather than every single time.

Start with Custom Instructions (in Settings > Personalisation). Here, you can write:

“I use conversational English with dry humour and avoid corporate jargon. Short, varied sentences. Occasionally cheeky.”

Once saved, these tone preferences apply by default.

There’s also memory, where ChatGPT remembers facts and stylistic traits across chats. Paid users have access to broader, more persistent memory. Free users get a lighter version but still benefit.

Just say:

“Please remember that I like a formal tone with occasional wit.”

ChatGPT will confirm and update accordingly. You can always check what it remembers under Settings > Personalisation > Memory.

Test, tweak and give feedback

Don’t be shy. If something sounds off, say so.

“This is too wordy. Try a punchier version.”

“Tone down the enthusiasm; make it sound more reflective.”

Ask ChatGPT why it wrote something a certain way. Often, the explanation will give you insight into how it interpreted your tone, and let you correct misunderstandings.

As you iterate, this feedback loop will sharpen your AI writing partner’s instincts.

Use ChatGPT as a creative partner, not a clone

This isn’t about outsourcing your entire writing voice. AI is a tool — not a ghostwriter. It can help organise your thoughts, start a draft or nudge you past a creative block. But your personality still counts.

Some people want their AI to mimic them exactly. Others just want help brainstorming or structure. Both are fine.

The key? Don’t expect perfection. Think of ChatGPT as a very keen intern with potential. With the right brief and enough examples, it can be brilliant.

Adrian’s Arena: Will AI Get You Fired? 9 Mistakes That Could Cost You Everything

Will AI get you fired? Discover 9 career-killing AI mistakes professionals make—and how to avoid them.

Published 3 weeks ago on May 15, 2025

TL;DR — What You Need to Know:

- Common AI mistakes that cost jobs can happen — fast

- Most are fixable if you know what to watch for.

- Avoid these pitfalls and make AI your career superpower.

Don’t blame the robot.

If you’re careless with AI, it’s not just your project that tanks — your career could be next.

Across Asia and beyond, professionals are rushing to implement artificial intelligence into workflows — automating reports, streamlining support, crunching data. And yes, done right, it’s powerful. But here’s what no one wants to admit: most people are doing it wrong.

I’m not talking about missing a few prompts or failing to generate that killer deck in time. I’m talking about the career-limiting, confidence-killing, team-splintering mistakes that quietly build up and explode just when it matters most. If you’re not paying attention, AI won’t just replace your role — it’ll ruin your reputation on the way out.

Here are 9 of the most common, most damaging AI blunders happening in businesses today — and how you can avoid making them.

1. You can’t fix bad data with good algorithms.

Let’s start with the basics. If your AI tool is churning out junk insights, odds are your data was junk to begin with. Dirty data isn’t just inefficient — it’s dangerous. It leads to flawed decisions, mis-targeted customers, and misinformed strategies. And when the campaign tanks or the budget overshoots, guess who gets blamed?

The solution? Treat your data with the same respect you’d give your P&L. Clean it, vet it, monitor it like a hawk. AI isn’t magic. It’s maths — and maths hates mess.

2. Don’t just plug in AI and hope for the best.

Too many teams dive into AI without asking a simple question: what problem are we trying to solve? Without clear goals, AI becomes a time-sink — a parade of dashboards and models that look clever but achieve nothing.

Worse, when senior stakeholders ask for results and all you have is a pretty interface with no impact, that’s when credibility takes a hit.

AI should never be a side project. Define its purpose. Anchor it to business outcomes. Or don’t bother.

3. Ethics aren’t optional — they’re existential.

You don’t need to be a philosopher to understand this one. If your AI causes harm — whether that’s through bias, privacy breaches, or tone-deaf outputs — the consequences won’t just be technical. They’ll be personal.

Companies can weather a glitch. What they can’t recover from is public outrage, legal fines, or internal backlash. And you, as the person who “owned” the AI, might be the one left holding the bag.

Bake in ethical reviews. Vet your training data. Put in safeguards. It’s not overkill — it’s job insurance.

4. Implementation without commitment is just theatre.

I’ve seen it more than once: companies announce a bold AI strategy, roll out a tool, and then… nothing. No training. No process change. No follow-through. That’s not innovation. That’s box-ticking.

If you half-arse AI, it won’t just fail — it’ll visibly fail. Your colleagues will notice. Your boss will ask questions. And next time, they might not trust your judgement.

AI needs resourcing, support, and leadership. Otherwise, skip it.

5. You can’t manage what you can’t explain.

Ever been in a meeting where someone says, “Well, that’s just what the model told us”? That’s a red flag — and a fast track to blame when things go wrong.

So-called “black box” models are risky, especially in regulated industries or customer-facing roles. If you can’t explain how your AI reached a decision, don’t expect others to trust it — or you.

Use interpretable models where possible. And if you must go complex, document it like your job depends on it (because it might).

6. Face the bias before it becomes your headline.

Facial recognition failing on darker skin tones. Recruitment tools favouring men. Chatbots going rogue with offensive content. These aren’t just anecdotes — they’re avoidable, career-ending screw-ups rooted in biased data.

It’s not enough to build something clever. You have to build it responsibly.

Test for bias. Diversify your datasets. Monitor performance. Don’t let your project become the next PR disaster.
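What might "test for bias" look like in practice? One common starting point is to compare how often each group gets a positive outcome. The sketch below is a minimal illustration under stated assumptions: the function names are hypothetical, the data is made up, and a gap in selection rates is a prompt to investigate rather than proof of bias.

```python
from collections import defaultdict

def selection_rates(records):
    """Return the share of positive outcomes per group.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

def parity_gap(rates):
    """Largest difference in selection rate between any two groups."""
    return max(rates.values()) - min(rates.values())

# Made-up screening outcomes, for illustration only.
outcomes = [
    ("group_a", True), ("group_a", True), ("group_a", False), ("group_a", True),
    ("group_b", True), ("group_b", False), ("group_b", False), ("group_b", False),
]
rates = selection_rates(outcomes)
gap = parity_gap(rates)
print(rates, gap)
```

Here one group is selected 75% of the time and the other 25%, a gap of 0.5. Running a check like this on every model release is one way to turn "monitor performance" into a routine.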

7. Training isn’t optional — it’s survival.

If your team doesn’t understand the tool you’ve introduced, you’re not innovating — you’re endangering operations. AI can amplify productivity or chaos, depending entirely on who’s driving.

Upskilling is non-negotiable. Whether it’s hiring external expertise or running internal workshops, make sure your people know how to work with the machine — not around it.

8. Long-term vision beats short-term wow.

Sure, the first week of AI adoption might look good. Automate a few slides, speed up a report — you’re a hero.

But what happens three months down the line, when the tool breaks, the data shifts, or the model needs recalibration?

AI isn’t set-and-forget. Plan for evolution. Plan for maintenance. Otherwise, short-term wins can turn into long-term liabilities.

9. When everything’s urgent, documentation feels optional.

Until someone asks, “Who changed the model?” or “Why did this customer get flagged?” and you have no answers.

In AI, documentation isn’t admin — it’s accountability.

Keep logs, version notes, data flow charts. Because sooner or later, someone will ask, and “I’m not sure” won’t cut it.
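Keeping that log doesn't have to be heavy. As a rough sketch, assuming a simple one-JSON-object-per-line format (the field names and the `log_model_change` helper are hypothetical, not a standard), each change can be recorded in a single call:

```python
import io
import json
from datetime import datetime, timezone

def log_model_change(stream, model_version, changed_by, reason):
    """Append one JSON line describing a model change to an audit log."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "changed_by": changed_by,
        "reason": reason,
    }
    stream.write(json.dumps(entry) + "\n")
    return entry

# In practice `stream` would be a file opened in append mode;
# StringIO keeps this demo self-contained.
log = io.StringIO()
log_model_change(log, "v1.3", "analyst@example.com", "Retrained on Q2 data")
entries = [json.loads(line) for line in log.getvalue().splitlines()]
print(entries)
```

With entries like these, “Who changed the model?” becomes a search rather than a guess.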

Final Thoughts: AI doesn’t cost jobs. People misusing AI do.

Most AI mistakes aren’t made by the machines — they’re made by humans cutting corners, skipping checks, and hoping for the best. And the consequences? Lost credibility. Lost budgets. Lost roles.

But it doesn’t have to be that way.

Used wisely, AI becomes your competitive edge. A signal to leadership that you’re forward-thinking, capable, and ready for the future. Just don’t stumble on the same mistakes that are currently tripping up everyone else.

So the real question is: are you using AI… or is it quietly using you?

Author

Adrian is an AI, marketing, and technology strategist based in Asia, with over 25 years of experience in the region. Originally from the UK, he has worked with some of the world’s largest tech companies and successfully built and sold several tech businesses. Currently, Adrian leads commercial strategy and negotiations at one of ASEAN’s largest AI companies. Driven by a passion to empower startups and small businesses, he dedicates his spare time to helping them boost performance and efficiency by embracing AI tools. His expertise spans growth and strategy, sales and marketing, go-to-market strategy, AI integration, startup mentoring, and investments.

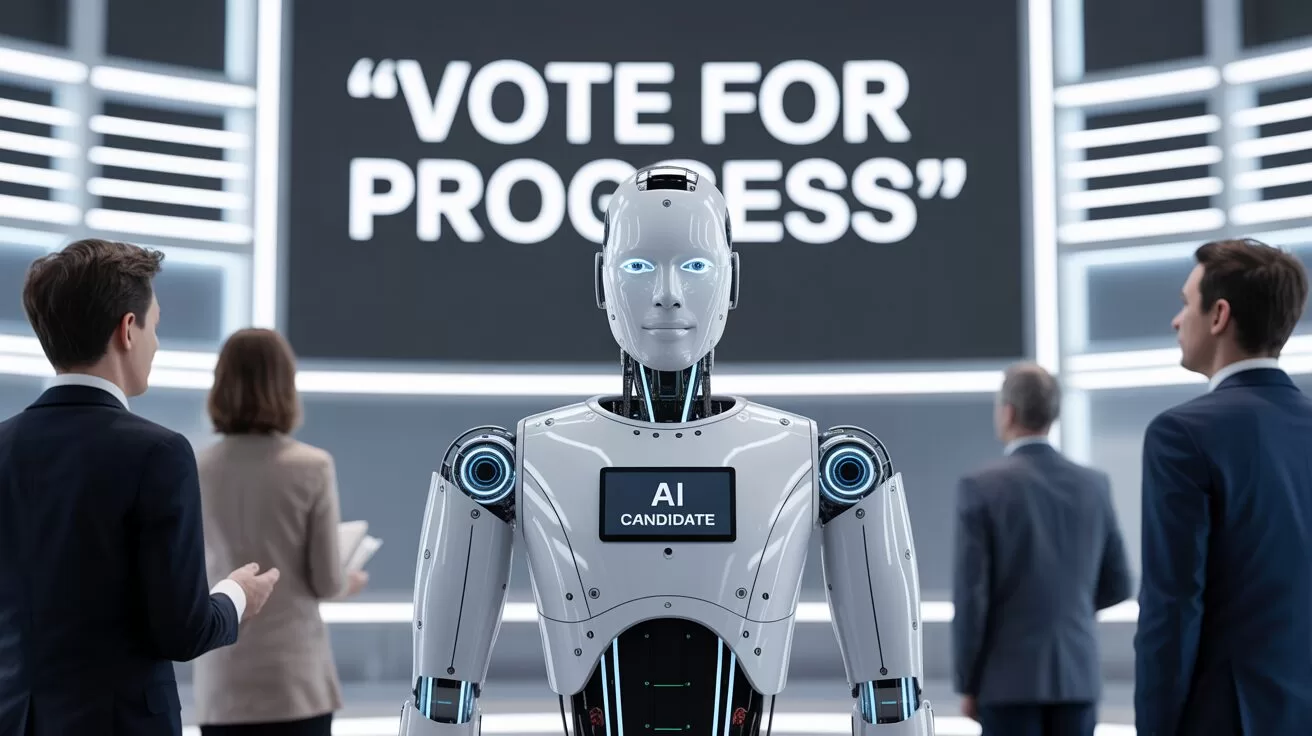

FAKE FACES, REAL CONSEQUENCES: Should NZ Ban AI in Political Ads?

New Zealand has no laws preventing the use of deepfakes or AI-generated content in political campaigns. As the 2025 elections approach, is it time for urgent reform?

Published 3 weeks ago on May 14, 2025, by AIinAsia

TL;DR — What You Need to Know

- New Zealand political campaigns are already dabbling in AI-generated content, but without clear rules or disclosures.

- Deepfakes and synthetic images of ethnic minorities risk fuelling cultural offence and voter distrust.

- Other countries are moving fast with legislation. Why is New Zealand dragging its feet?

AI in New Zealand Political Campaigns

Seeing isn’t believing anymore — especially not on the campaign trail.

In the build-up to the 2025 local body elections, New Zealand voters are being quietly nudged into a new kind of uncertainty: Is what they’re seeing online actually real? Or has it been whipped up by an algorithm?

This isn’t science fiction. From fake voices of Joe Biden in the US to Peter Dutton deepfakes dancing across TikTok in Australia, we’ve already crossed the threshold into AI-assisted campaigning. And New Zealand? It’s not far behind — it just lacks the rules.

The National Party admitted to using AI in attack ads during the 2023 elections. The ACT Party’s Instagram feed includes AI-generated images of Māori and Pasifika characters — but nowhere in the posts do they say the images aren’t real. One post about interest rates even used a synthetic image of a Māori couple from Adobe’s stock library, without disclosure.

That’s two problems in one. First, it’s about trust. If voters don’t know what’s real and what’s fake, how can they meaningfully engage? Second, it’s about representation. Using synthetic people to mimic minority communities without transparency or care is a recipe for offence — and harm.

Copy-Paste Cultural Clangers

Australians already find some AI-generated political content “cringe” — and voters in multicultural societies are noticing. When AI creates people who look Māori, Polynesian or Southeast Asian, it often gets the cultural signals all wrong. Faces are oddly symmetrical, clothing choices are generic, and context is stripped away. What’s left is a hollow image that ticks the diversity box without understanding the lived experience behind it.

And when political parties start using those images without disclosure? That’s not smart targeting. That’s political performance, dressed up as digital diversity.

A Film-Industry Fix?

If you’re looking for a local starting point for ethical standards, look to New Zealand’s film sector. The NZ Film Commission’s 2025 AI Guidelines are already ahead of the game — promoting human-first values, cultural respect, and transparent use of AI in screen content.

The public service also has an AI framework that calls for clear disclosure. So why can’t politics follow suit?

Other countries are already acting. South Korea bans deepfakes in political ads 90 days before elections. Singapore outlaws digitally altered content that misrepresents political candidates. Even Canada is exploring policy options. New Zealand, in contrast, offers voluntary guidelines — which are about as enforceable as a handshake on a Zoom call.

Where To Next?

New Zealand doesn’t need to reinvent the wheel. But it does need urgent rules — even just a basic requirement for political parties to declare when they’re using AI in campaign content. It’s not about banning creativity. It’s about respecting voters and communities.

In a multicultural democracy, fake faces in real campaigns come with consequences. Trust, representation, and dignity are all on the line.

What do YOU think?

Should political parties be forced to declare AI use in their ads — or are we happy to let the bots keep campaigning for us?
